
U.S. General Services Administration

FY 2025 Governmentwide Section 508 Assessment

Message from the GSA Administrator

The General Services Administration (GSA) is submitting the Fiscal Year (FY) 2025 Governmentwide Section 508 Assessment as mandated by Public Law No. 117-328 (codified at 29 U.S.C. § 794d-1). GSA prepared this report in consultation with the Office of Management and Budget (OMB) and the U.S. Access Board and addresses it to the Senate Committees on Appropriations and Homeland Security and Governmental Affairs and the House Committees on Appropriations and Oversight and Government Reform.

GSA implemented significant changes to this year’s assessment to enhance its focus and impact. We transitioned from a compliance-driven activity to a more strategic framework that emphasizes high-priority accessibility and Section 508 efforts. Recognizing the administrative workload associated with data collections, OMB, in consultation with the U.S. Access Board and GSA, intentionally streamlined the FY 2025 criteria to lessen this burden. Reflecting our dedication to governmental efficiency, this year’s assessment provides a strategic perspective on digital accessibility, information and communication technology (ICT), and IT modernization. With a reduced reporting burden, agencies can reallocate resources to advance significant ICT and provide more cost-effective and accessible digital solutions that benefit all users.

Over 70 million U.S. adults reported having a disability. With over 2.23 billion federal website visits in the past month, it is essential for the government to provide high-quality digital products and services. This assessment builds on previous reports and takes a broad look at different factors to understand federal agencies’ compliance with Section 508 accessibility requirements, which lays the groundwork for future strategic planning and informed decision-making. With significant changes to the assessment criteria and shifts in the federal environment, FY 2025 establishes a new baseline for ICT accessibility throughout the federal government. GSA used responses from 212 agencies, parent agencies, and components to develop this assessment. More importantly, the agencies’ data will help GSA support them in pinpointing accessibility issues and identifying areas for improvement. This, in turn, will enhance the efficiency and accessibility of government technology and digital services.

We extend our gratitude to all agencies that participated in this collection. We encourage each agency to view this assessment as an opportunity for progress, concentrating on meaningful ICT accessibility improvements over the next year.

Respectfully submitted,

Edward C. Forst

Administrator

Executive Summary

The FY 2025 Governmentwide Section 508 Assessment establishes a new baseline for information and communication technology (ICT) accessibility across the federal government following significant revisions to the assessment criteria and changes in the federal digital environment. GSA developed this assessment using responses from 212 agencies, parent agencies, and components.

Highlighted Findings

Recommendations to Congress

Recommendations to Federal Agencies

Introduction & Background

Now in its third year, the Governmentwide Section 508 Assessment evaluates how well federal agencies provide accessible and usable information and communication technology (ICT) consistent with Section 508 requirements.

The FY 2025 assessment examines how federal agencies' Section 508 implementation and ICT accessibility outcomes are evolving and identifies governmentwide challenges and opportunities. Given significant changes to assessment criteria and the broader federal environment, FY 2025 establishes a new baseline for measuring accessibility outcomes across government, and the findings highlight how governance models, resource allocation, and implementation affect ICT accessibility.

This assessment applies to federal agencies subject to Section 508, relying on OMB Circular A-11 Appendix C for the definitions of “agencies” and “components.” For FY 2025, GSA analyzed submissions from 212 agencies, parent agencies, and components, including 21 Chief Financial Officers (CFO) Act agencies, 152 components from 12 CFO Act agencies, and 39 small and independent agencies. Throughout this report, “agency” refers to agency-level or parent agency submissions, while “component” refers to subordinate organizational units that submitted component-level data.

In coordination with GSA and the U.S. Access Board, OMB streamlined the FY 2025 assessment criteria to focus on high-impact accessibility outcomes while reducing reporting burden. The criteria centered on four areas:

In previous assessments, agencies and components responded to the same criteria. This year, parent-level agencies included component data in their submissions. Components answered questions only from the perspective of their component and had the option to answer questions under the Acquisition and Procurement and Testing and Remediation categories only if they performed those activities independently of or in addition to their parent agency.

GSA's Reporting Requirement

Under 29 U.S.C. § 794d-1(b), GSA has a statutory requirement to provide an annual comprehensive assessment of Section 508 compliance across the federal government.

GSA Reporting Efforts:

Recent GSA Efforts to Support Section 508 Compliance

The following describes GSA’s efforts to help improve federal ICT accessibility since GSA’s last report to Congress.

Governmentwide Assessment-Related Actions

Guidance and Best Practices

Interagency Collaboration and Knowledge Sharing

Technical Assistance, Tools, and Training

Upcoming GSA Efforts to Support Section 508 Compliance

The following describes upcoming GSA efforts to help improve federal ICT accessibility.

Governmentwide Assessment-Related Actions

Guidance and Best Practices

Interagency Collaboration and Knowledge Sharing

Technical Assistance, Tools, and Training

Governmentwide Findings

The size and composition of agencies that submitted data for the FY 2025 assessment provide context for interpreting governmentwide accessibility outcomes. Agencies self-reported their size based on estimated federal employee counts at the time of data submission; for this analysis, GSA reclassified one cabinet-level agency as a “very large agency” using January 2026 FedScope data. No other agency classifications were changed.

Sixty agencies submitted data, comprising:

The FY 2025 assessment asked agencies to respond to criteria that fell into four accessibility factors, grouped into two evaluation indices:

The assessment details each of these accessibility factors in later sections of the report and in Appendix A: Methods.

Findings

GSA evaluated responses to specific assessment criteria to generate an aggregated rating or outcome on a 5-point scale and determine how well an agency fared for each accessibility factor. GSA grouped outcomes into performance categories that ranged from Very Low to Very High, similar to previous years of this report. Table 1 below denotes the outcome scale ranges and corresponding performance categories.

| Outcome Range | Performance Category |
|---|---|
| 0 to 1 | Very Low |
| >1 to 2 | Low |
| >2 to 3 | Moderate |
| >3 to 4 | High |
| >4 to 5 | Very High |

| Factor | Average Outcome | Performance Category | Evaluation Indices |
|---|---|---|---|
| Policy Integration | 3.04 | High | Implementation (i-index) |
| ICT Acquisition and Procurement | 3.44 | High | Implementation (i-index) |
| Testing and Remediation | 2.00 | Low | Implementation (i-index) |
| Accessibility Conformance | 1.96 | Low | Conformance (c-index) |
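The mapping from outcome to performance category can be expressed as a short lookup. The following is a minimal sketch, assuming each range in the outcome scale is inclusive at its upper bound (as the ">1 to 2" notation suggests); the function name `performance_category` is illustrative and does not come from the assessment methodology.

```python
def performance_category(outcome: float) -> str:
    """Map a 0-5 outcome to a performance category from the outcome scale."""
    if not 0 <= outcome <= 5:
        raise ValueError("outcome must be between 0 and 5")
    # Assumption: each upper bound is inclusive (e.g., exactly 2.00 is "Low").
    bands = [(1, "Very Low"), (2, "Low"), (3, "Moderate"), (4, "High"), (5, "Very High")]
    for upper, label in bands:
        if outcome <= upper:
            return label
    raise AssertionError("unreachable: outcome already validated")


# Governmentwide factor averages as reported in the factor table.
factor_averages = {
    "Policy Integration": 3.04,
    "ICT Acquisition and Procurement": 3.44,
    "Testing and Remediation": 2.00,
    "Accessibility Conformance": 1.96,
}

for factor, average in factor_averages.items():
    print(f"{factor}: {average:.2f} -> {performance_category(average)}")
```

Under this reading, the reported averages map to the same categories the report lists for each factor.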

Implementation-Conformance Relationship - Scatterplot Analysis

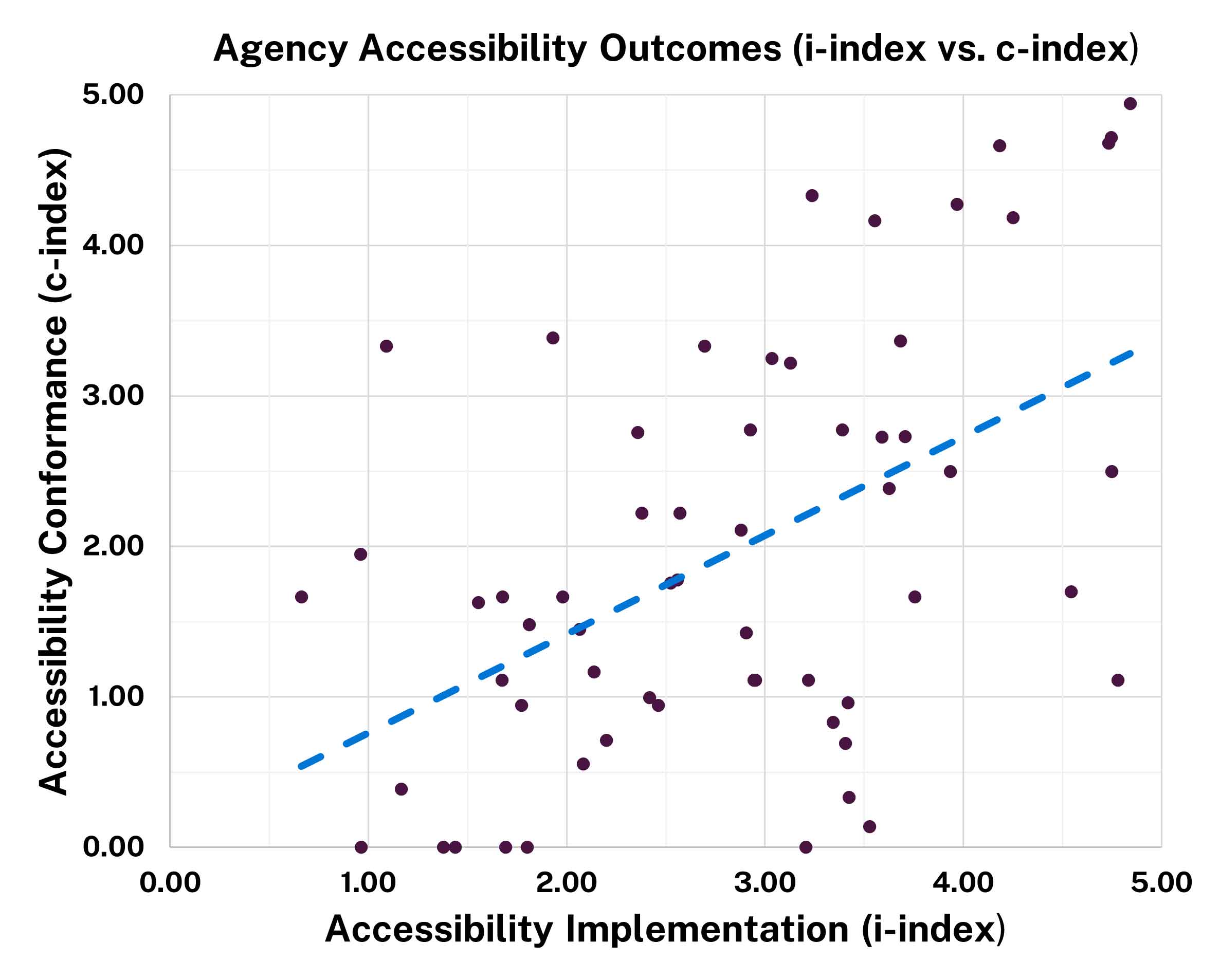

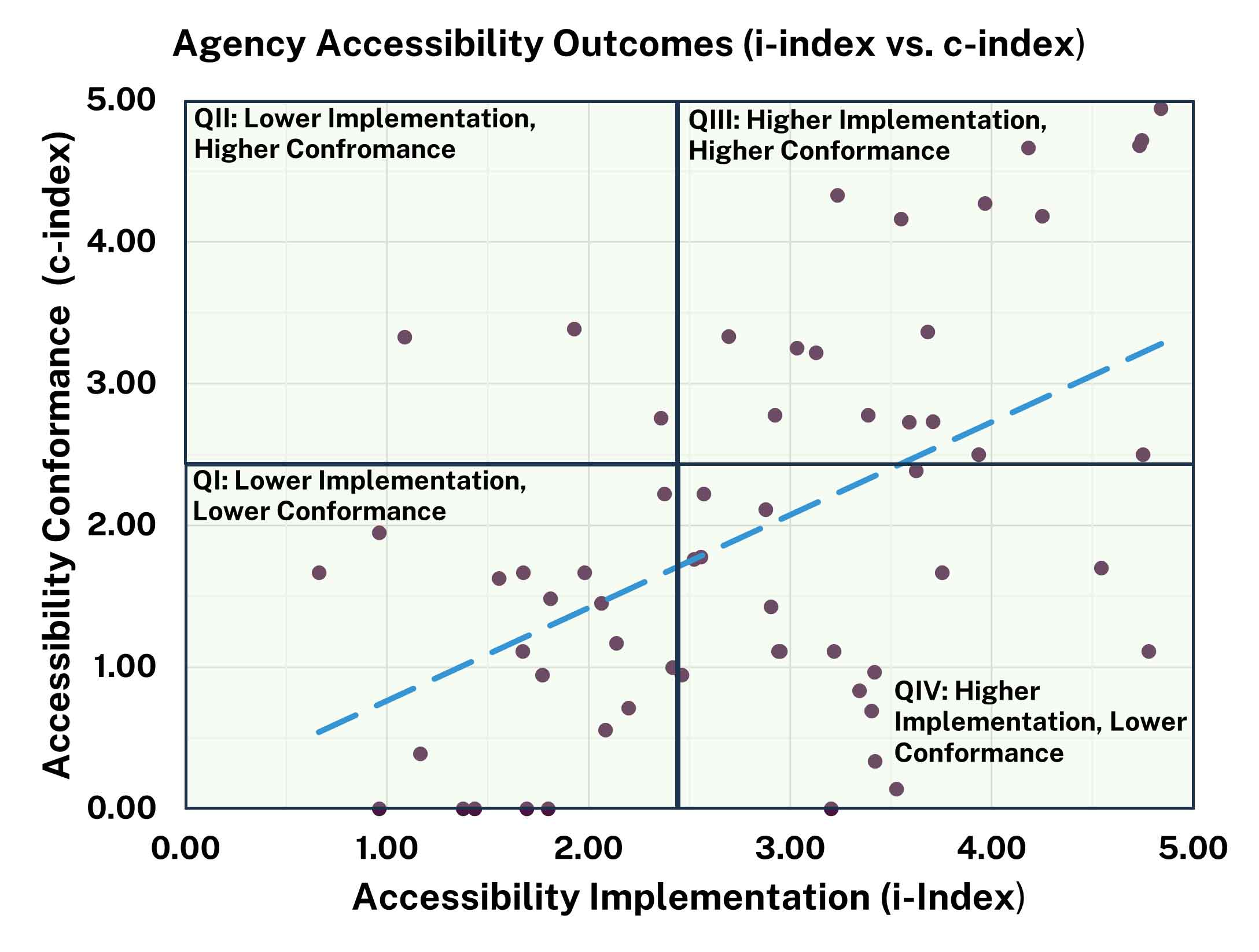

GSA combined the outcomes for three accessibility factors—Policy Integration, Acquisition and Procurement, and Testing and Remediation—into one summary evaluation index: the Accessibility Implementation (i-index). We use this summary index to compare the administration, policy integration and execution of ICT accessibility across the enterprise with the agencies’ overall Accessibility Conformance (c-index). Figure 1 shows the outcomes for each of these indices by agency:

Figure 1 shows a wide range of outcomes for both Accessibility Implementation and Accessibility Conformance. Implementation outcomes ranged from 0.66 to 4.84, while conformance ranged from 0 to 4.94. The graph shows a positive relationship between implementation and conformance, as demonstrated by the dashed line. Agencies that invest in and implement repeatable accessibility processes across the enterprise are more likely to have positive outcomes with respect to accessibility conformance. In general, the better an agency integrates accessibility best practices across the enterprise, the better its conformance outcomes tend to be. The presence of a notable group of agencies where higher implementation levels do not correspond with higher conformance outcomes suggests potential challenges related to the quality of accessibility practices or gaps between implementation activities and measurable results. Some agencies achieve moderate conformance despite limited enterprise integration, suggesting localized or ad hoc success that may not scale or sustain without stronger governance.

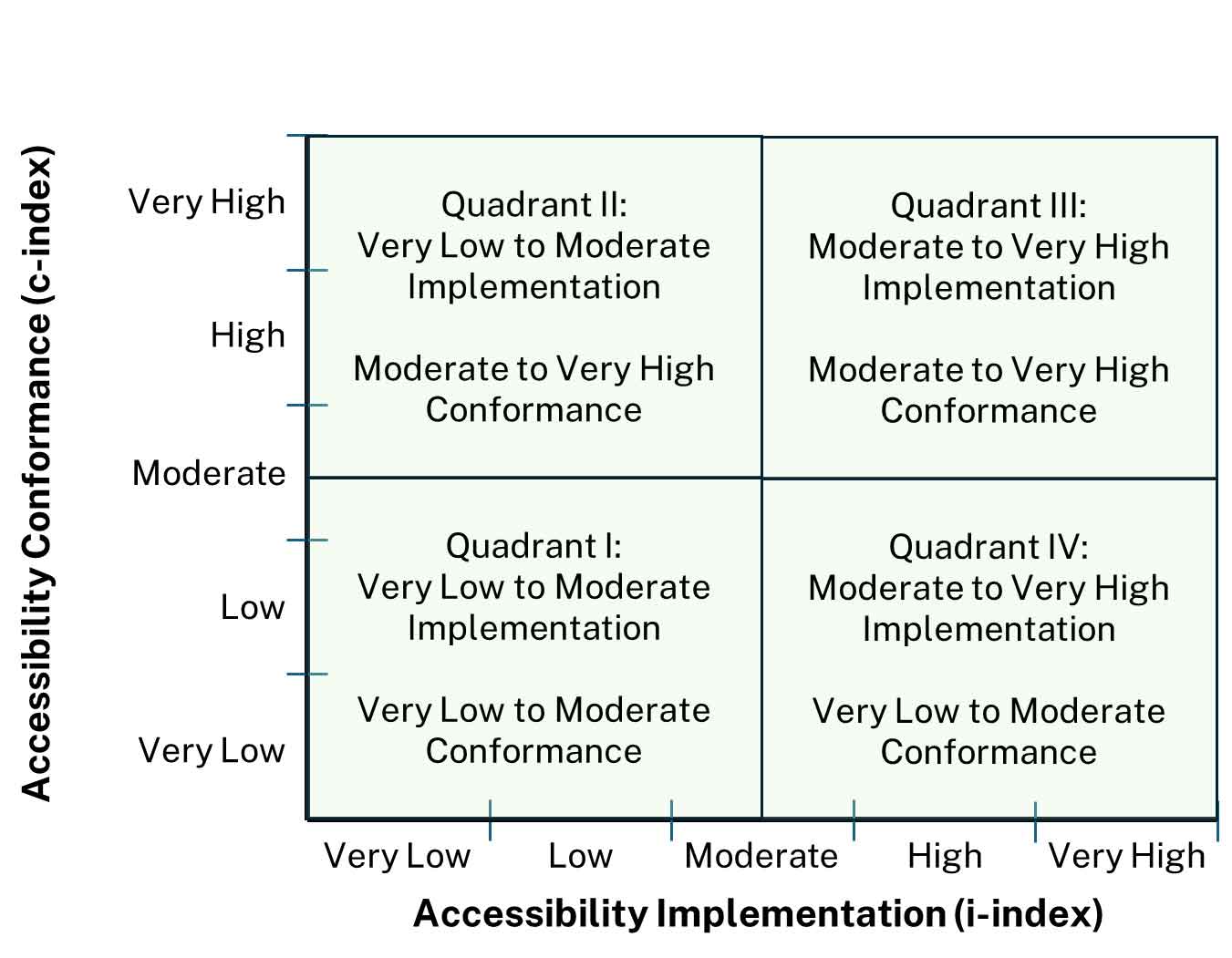

For more discrete analysis, we grouped agency accessibility outcomes into four quadrants as shown in Figure 2 with the boundaries ranging from 0 to 2.5 and >2.5 to 5.

| Quadrant | # of Agencies | Quadrant Recommendation |
|---|---|---|
| III: Higher Implementation, Higher Conformance | 16 | Continue investment and a focus on continuous process improvement activities to see incremental improvements in both inputs (integration, acquisitions, testing) and outputs (ICT conformance). |
| IV: Higher Implementation, Lower Conformance | 20 | Prioritize investment in the execution of testing processes and ways to implement established policy and standard operating procedures to increase conformance. |
| II: Lower Implementation, Higher Conformance | 3 | Prioritize investment in developing processes and policies that champion and institute ICT accessibility across the enterprise. |
| I: Lower Implementation, Lower Conformance | 21 | Focus on establishing baseline governance by assigning ownership, adopting core policies and procedures, and prioritizing testing and remediation for high-impact ICT, leveraging shared services and existing federal resources. |
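The quadrant grouping can be sketched as a simple classification on the two indices. This is an illustrative sketch only: it assumes the stated boundaries mean that outcomes from 0 to 2.5 count as "lower" and outcomes above 2.5 count as "higher" on each axis, and the function name and sample index pairs are hypothetical rather than actual agency data.

```python
def quadrant(i_index: float, c_index: float, boundary: float = 2.5) -> str:
    """Assign an agency to a quadrant from its i-index and c-index."""
    # Assumption: values above the boundary are "higher"; 0 through 2.5 are "lower".
    higher_implementation = i_index > boundary
    higher_conformance = c_index > boundary
    if higher_implementation and higher_conformance:
        return "III: Higher Implementation, Higher Conformance"
    if higher_implementation:
        return "IV: Higher Implementation, Lower Conformance"
    if higher_conformance:
        return "II: Lower Implementation, Higher Conformance"
    return "I: Lower Implementation, Lower Conformance"


# Hypothetical (i-index, c-index) pairs for illustration only.
for i_index, c_index in [(4.1, 3.6), (3.2, 1.4), (1.8, 3.0), (0.66, 0.0)]:
    print(f"i={i_index}, c={c_index} -> {quadrant(i_index, c_index)}")
```

An agency sitting exactly on the 2.5 boundary falls into the "lower" group under this assumption.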

Accessibility Conformance Outcomes

This section examines reported Section 508 conformance outcomes for tested ICT and for the most frequently used or viewed ICT. While conformance scores provide an important indicator of accessibility outcomes, they reflect only the subset of ICT that agencies tested and reported during the assessment period. As a result, these findings should be interpreted alongside testing coverage, tracking practices, and reporting changes introduced in FY 2025. Together, the results highlight not only where accessibility barriers persist, but also how differences in testing scope, data maturity, and governance influence reported conformance outcomes across the federal enterprise.

Interpreting Conformance Outcomes

Conformance outcomes reflect both accessibility performance and agencies’ testing and reporting practices. Agencies that test a broader and more representative portion of their ICT portfolios tend to report lower average conformance, while agencies that test a narrower subset of ICT often report higher conformance rates within that limited scope. As a result, higher reported conformance does not necessarily indicate stronger enterprise-wide accessibility, particularly when testing coverage is incomplete or uneven across ICT types.

Changes to FY 2025 reporting also affect year-over-year interpretation; specifically, the respondent pool was smaller. These shifts influence aggregate conformance percentages and reduce comparability with earlier assessments. Accordingly, conformance results are better read as a directional indicator of accessibility outcomes than as a definitive measure of governmentwide compliance. Improving participation and response rates in future reporting cycles will be essential to producing more robust, stable, and comparable results year over year.

Key Takeaways

Assessment

The FY 2025 assessment asked agencies about the testing and conformance of ICT including:

GSA analyzed data from 60 agencies to determine the level of Section 508 conformance. Components did not submit accessibility conformance information independently. Submissions from parent agencies should include accessibility conformance data for their respective components.

Conformance Outcomes Versus Agency Size

Conformance outcomes revealed that an agency's size was not a determining factor for overall conformance levels. Agencies of various sizes were distributed across all five performance outcome categories (Very Low to Very High) (see Table 4).

The assessment revealed varied agency outcomes regarding ICT conformance. While some agencies reported a lack of ICT testing altogether, others noted testing but no fully conformant ICT. Additionally, a third group of agencies demonstrated comprehensive testing and reported fully conformant ICT.

| Agency Size | Very Low Conformance | Low Conformance | Moderate Conformance | High Conformance | Very High Conformance |
|---|---|---|---|---|---|
| Very Large (≥75,000 employees) | 2 | 4 | 1 | 0 | 1 |
| Large (10,000-74,999 employees) | 3 | 2 | 3 | 1 | 1 |
| Medium (1,000-9,999 employees) | 7 | 2 | 1 | 2 | 2 |
| Small (100-999 employees) | 2 | 5 | 3 | 0 | 1 |
| Very Small (<100 employees) | 3 | 5 | 3 | 3 | 3 |

Findings for Conformance of Tested ICT

The assessment asked agencies to report on the accessibility testing outcomes for ICT evaluated during routine business operations over the past year. Agencies reported on public-facing and intranet web pages, hardware, software, and public-facing electronic documents. The assessment survey asked agencies to report the total number of items they owned and operated in each category, how many were tested for Section 508 conformance, and how many fully conformed.

Key data points from 60 agencies include:

On average, 50 percent of agencies lacked a mechanism to track ICT accessibility conformance testing and results. This includes:

Over one-third of the 60 agencies could not estimate the number of ICT they own or operate for at least one ICT type. This includes:

Analysis reveals varying levels of conformance and varying amounts of ICT tested. Approximately half of agencies reported testing their ICT within the last year; the other half did not provide data. Among the ICT tested, agencies reported varying levels of full conformance across ICT categories:

Agencies should monitor and provide more data to achieve a complete understanding of governmentwide ICT conformance with Section 508. This is especially important for agencies that do not currently monitor or quantify the ICT they own, operate, or test. There is a clear opportunity to improve the scope and coverage of ICT testing.

| ICT Type | Number of agencies that submitted data (out of 60) | Total owned or operated by agencies (estimated) | Percentage of total tested | Percentage tested that fully conform |
|---|---|---|---|---|
| Public-Facing Web Pages | 41 | 1,675,226 | 37% | 72% |
| Intranet Web Pages | 30 | 4,701 | 53% | 65% |
| Electronic Documents | 38 | 4,602,276 | 25% | 38% |
| Hardware | 24 | 1,762,155 | 4% | 83% |
| Software | 27 | 47,104 | 5% | 47% |
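To show why conformance rates need to be read alongside testing coverage, the reported percentages can be converted into approximate item counts. The sketch below is a rough illustration, not GSA's method: it assumes both percentages apply directly to the estimated governmentwide totals, and because the published percentages are rounded, the resulting counts are approximate. The helper name `estimate_counts` is hypothetical.

```python
# (estimated total owned or operated, percent tested, percent of tested that fully conform)
reported = {
    "Public-Facing Web Pages": (1_675_226, 37, 72),
    "Intranet Web Pages": (4_701, 53, 65),
    "Electronic Documents": (4_602_276, 25, 38),
    "Hardware": (1_762_155, 4, 83),
    "Software": (47_104, 5, 47),
}


def estimate_counts(total: int, pct_tested: int, pct_conformant: int):
    """Return approximate tested count, conformant count, and conformant share of all items."""
    # Assumption: rounded percentages are applied to the estimated totals as published.
    tested = round(total * pct_tested / 100)
    conformant = round(tested * pct_conformant / 100)
    share_of_total = conformant / total
    return tested, conformant, share_of_total


for ict_type, (total, pct_tested, pct_conformant) in reported.items():
    tested, conformant, share = estimate_counts(total, pct_tested, pct_conformant)
    print(f"{ict_type}: ~{tested:,} tested, ~{conformant:,} fully conformant "
          f"({share:.1%} of all owned or operated items)")
```

Under these assumptions, an ICT type with a high conformance rate among tested items can still have only a small share of its total inventory confirmed as conformant when testing coverage is low.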

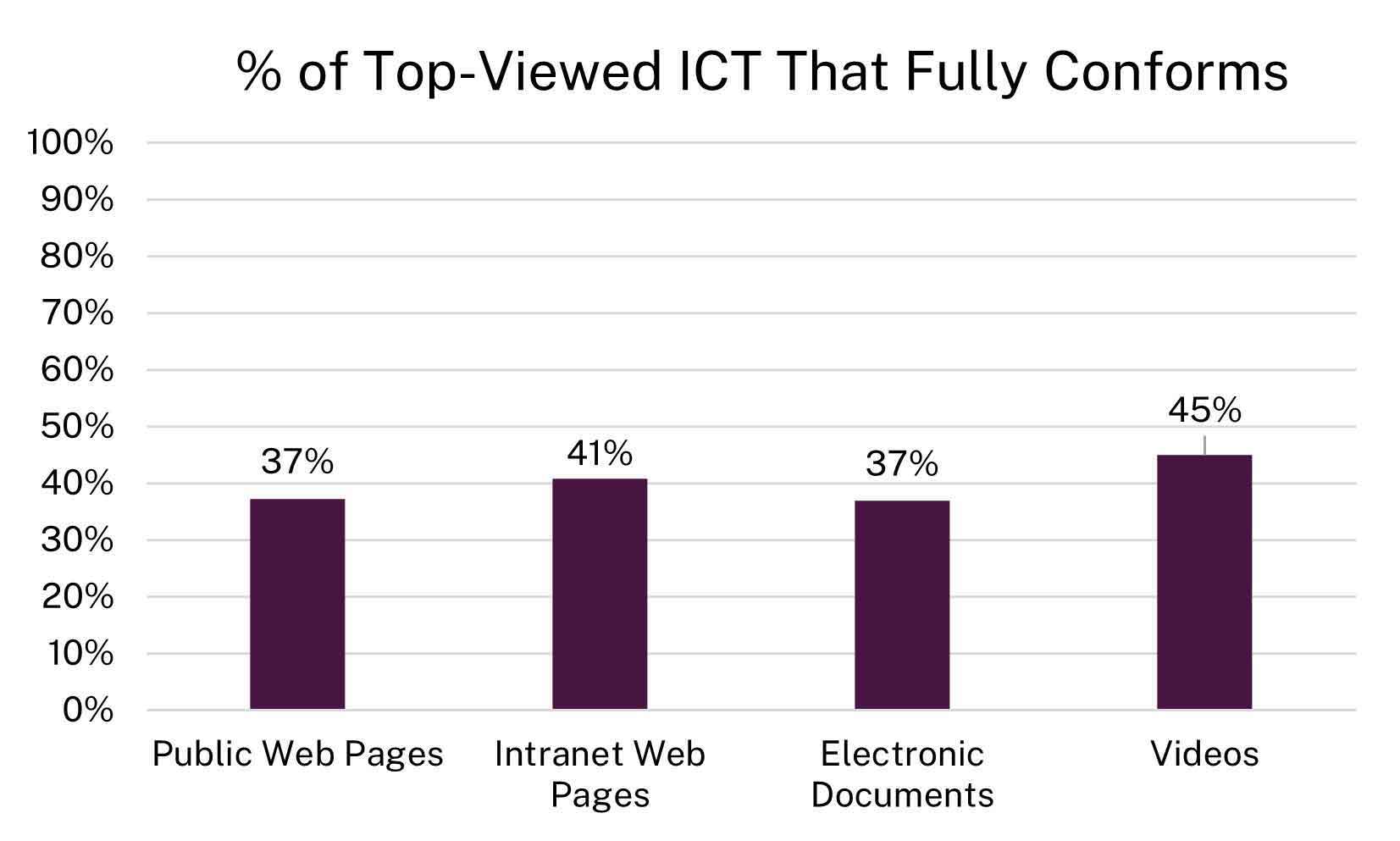

Findings for Conformance of Top-Viewed ICT

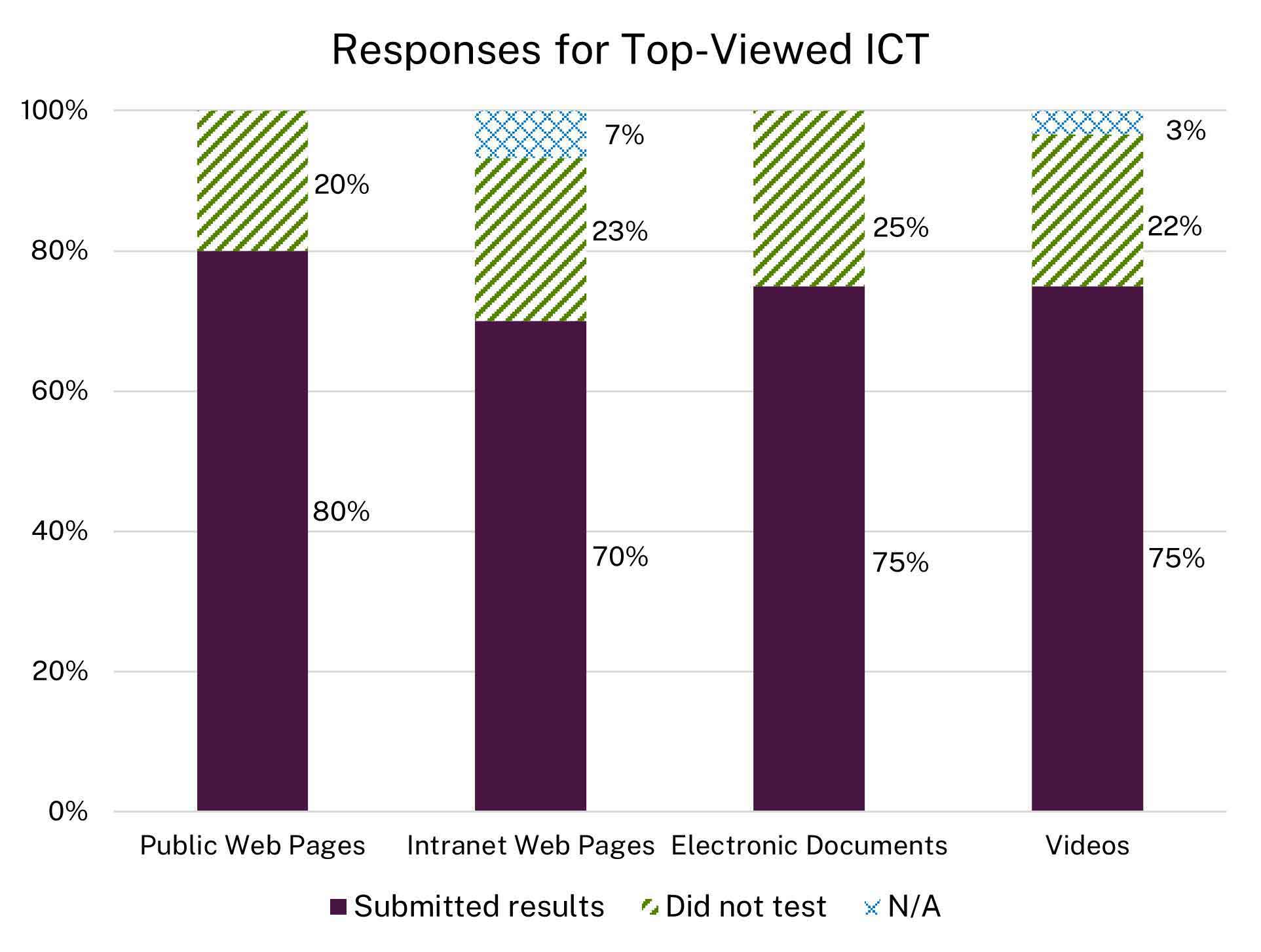

As Figure 4 shows, on average, 23 percent of agencies did not test at least one of their top-viewed ICTs. This year's submission included less top-viewed ICT data because components did not submit their own data; instead, it was included in their parent agencies’ submissions. The top-viewed submissions only included the top five videos and top 10 web pages and electronic documents for the entire agency or parent agency. The submitted data indicates that agencies lack sufficient resources or capacity to conduct comprehensive testing of the ICT they procure, develop, maintain, or use.

Overall governmentwide Section 508 conformance for top-viewed ICT is low, with less than half fully conformant to Section 508 standards. The reported top-viewed ICT outcomes show:

- Public Web Pages: 37% fully conformant
- Intranet Web Pages: 41% fully conformant
- Public Electronic Documents: 37% fully conformant
- Public Videos: 45% fully conformant

| Top-Viewed ICT by Type | Number of agencies that tested ICT (out of 60) | Number of agencies that did not test ICT (out of 60) | Percentage of fully conformant ICT governmentwide |
|---|---|---|---|
| Public Web Pages | 48 | 12 | 37% |
| Intranet Web Pages | 42 (four agencies noted they do not have an intranet) | 14 | 41% |
| Public Electronic Documents | 45 | 15 | 37% |
| Public Videos | 45 (two agencies noted they do not have any videos) | 13 | 45% |

Top Defects

Accessibility Implementation Outcomes

This section examines responses for three factors: Policy Integration, Acquisition and Procurement, and Testing and Remediation. Taken with Accessibility Conformance, responses suggest that governance and implementation effectiveness, not agency size, drive accessibility outcomes. Agencies that more effectively integrate Section 508 into policy, acquisition, and testing processes tend to achieve better ICT conformance, while fragmented implementation corresponds with lower ICT conformance. The results highlight not only where implementation barriers persist, but also how differences in acquisition processes, testing methods, and remediation techniques impact outcomes across the federal enterprise.

Interpreting Implementation Outcomes

Implementation outcomes reflect agency and component perspectives regarding how well agencies integrate accessibility practices or how frequently they perform specific actions related to key tasks. While all 60 agencies responded to each set of questions, the number of component responses varied by question set.

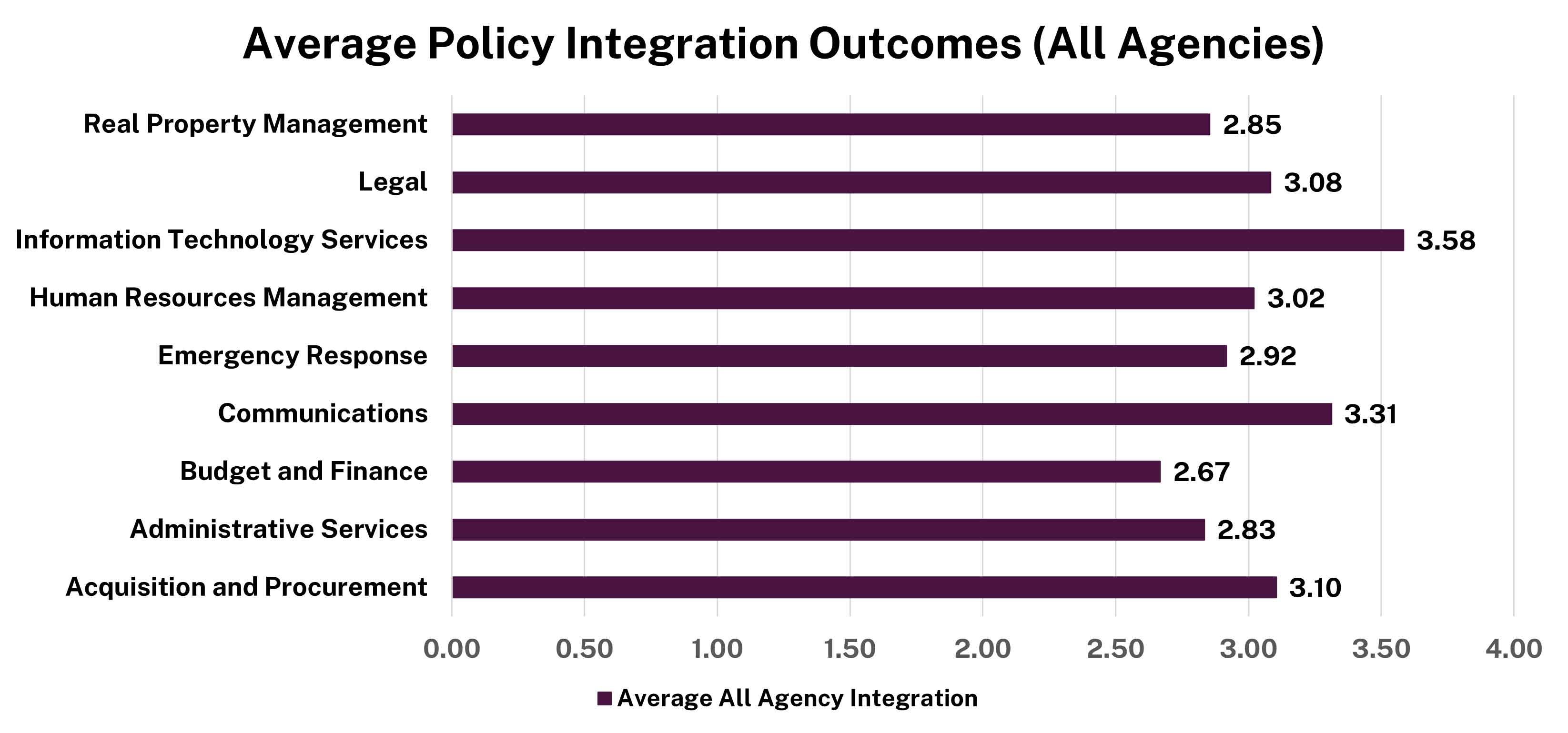

Policy Integration

Key Takeaways

Assessment

The FY 2025 assessment examined the extent to which ICT accessibility is integrated into nine core business functions across agencies and components.

All 212 respondents, 60 agencies and 152 components, provided responses.

Findings

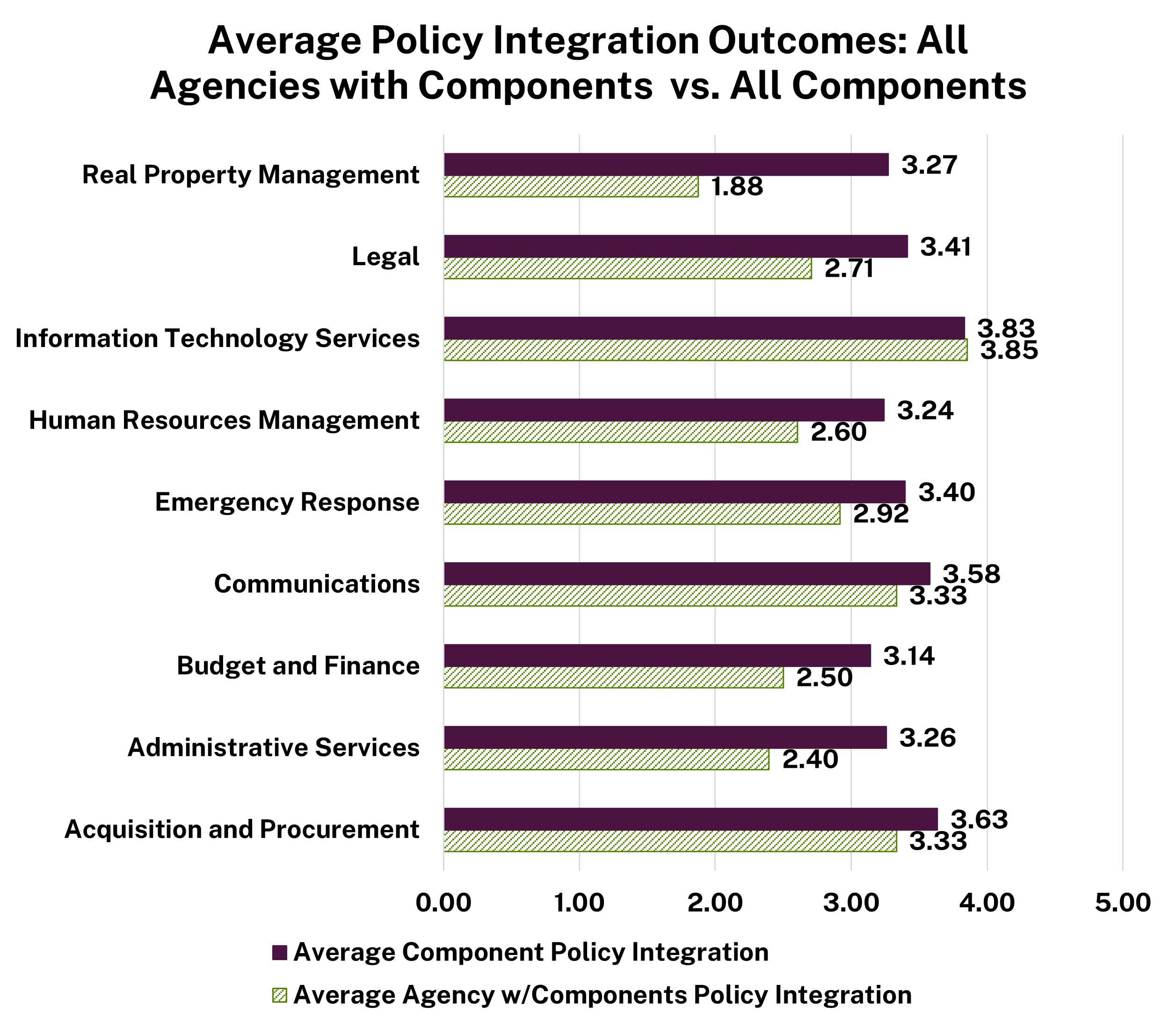

Integrating ICT accessibility into core agency and component functions supports more consistent Section 508 compliance. However, integration varied significantly by business functions (Figure 6).

Information Technology Services and Communications showed the highest levels of policy integration, while Emergency Response and Budget and Finance showed the lowest.

Comparisons between parent agencies and their components show broadly similar patterns, with components reporting slightly higher integration across most business functions (Figure 7).

The largest gaps between agency-level and component-level accessibility integration appear in Budget and Finance and Acquisition and Procurement, where components report stronger integration than parent agencies. This pattern suggests that accessibility integration often occurs where operational control over funding and purchasing decisions is strongest, while departmentwide governance and standardization remain underdeveloped. Stronger integration into enterprise-level acquisition and budget policies could improve consistency, reduce downstream remediation, and lower long-term costs.

Human Resources Management and IT Services showed the smallest agency-component gaps, suggesting more consistent integration, likely supported by enterprise-wide systems, shared services, or centralized policies.

Best Practices and Remaining Challenges

During the past year, only two agencies reported taking deliberate steps to review and update policies to better integrate Section 508 requirements across core business functions. These efforts required coordination with program offices, clarifying accessibility expectations, and strengthening collaboration across functional areas. Significant challenges remain due to fragmented policy structures and limited integration of Section 508 requirements into operational policies. Although many agencies maintain standalone Section 508 policies, related policies governing acquisition, IT, communications, and other core functions often do not fully integrate accessibility requirements. This fragmentation weakens enforcement, contributes to inconsistent implementation, and increases the risk of developing or procuring inaccessible ICT.

Acquisition and Procurement

Key Takeaways

Assessment

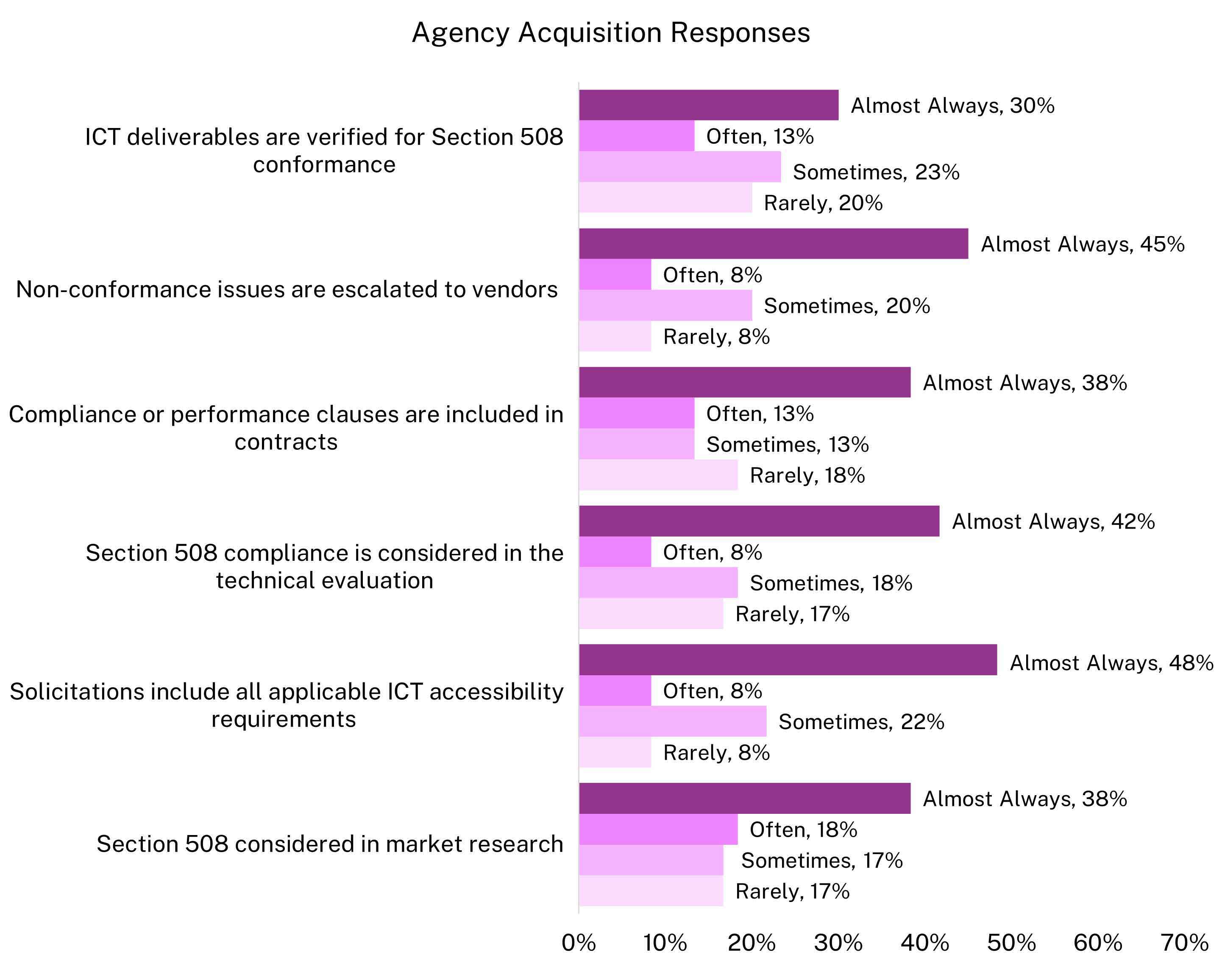

Assessment questions focused on pre-award activities (market research, solicitations, proposal evaluation) and post-award activities (contract clauses, defect escalation, and verification of deliverables).

Components answered these questions only if they performed acquisition and procurement activities independently or in addition to their parent agency. Sixty agencies and 110 components provided responses.

Findings

To reduce accessibility issues during or after implementation, agencies must integrate Section 508 requirements throughout the procurement lifecycle and carry them through delivery, testing and acceptance. This helps ensure vendors and procurement officials remain accountable for accessibility throughout the ICT lifecycle. When agencies do not integrate Section 508 into procurement, they face predictable risks, including:

The average agency Acquisition and Procurement factor outcome was 3.44 (High) on a 5-point scale, with outcomes ranging from 0 to 5.

Components from 10 agencies reported similar results. Among the 110 components that perform acquisition and procurement activities independently of or in addition to their parent agency, the average outcome was 3.72 (High), ranging from 0.42 to 5. Their 10 parent agencies reported an average outcome of 4.02 (Very High).

Among the agencies that perform acquisition activities, 31 (56 percent) reported mostly or fully integrating ICT accessibility into acquisition and procurement policies and functions.

Response data show a consistent pattern: agencies more often include Section 508 requirements than verify and enforce them. This gap between requirements-setting and follow-through limits vendor accountability and increases the likelihood that nonconformant ICT is accepted. Additional context:

| Frequency | Section 508 compliance is considered in market research | ICT solicitations include all applicable ICT accessibility requirements | Section 508 compliance is considered in the technical evaluation of ICT | Compliance or performance clauses are included in contracts to ensure vendor accountability | Nonconformance issues are escalated to vendors or contractors when found | ICT deliverables from a contract are verified for Section 508 conformance |
|---|---|---|---|---|---|---|
| Never (0%) | 3% | 7% | 8% | 10% | 12% | 7% |
| Rarely (1%-10%) | 17% | 8% | 17% | 18% | 8% | 20% |
| Sometimes (11%-50%) | 17% | 22% | 18% | 13% | 20% | 23% |
| Often (50%-90%) | 18% | 8% | 8% | 13% | 8% | 13% |
| Almost always (≥90%) | 38% | 48% | 42% | 38% | 45% | 30% |
| N/A – does not perform activity | 7% | 7% | 7% | 7% | 7% | 7% |

Overall, component-level acquisition practices mirror parent agencies’: components more consistently set requirements than verify and enforce them (Table 8).

On average, 62 percent of components reported “often” or “almost always” performing each assessed activity, comparable to 65 percent for parent agencies. Components demonstrated stronger performance in including accessibility requirements in solicitations: 74 percent reporting “often” or “almost always” doing so, compared to 60 percent of parent agencies.

Components and parent agencies reported similar outcomes in technical evaluations: 62 percent of components and 60 percent of parent agencies reported “often” or “almost always” considering Section 508 prior to award. Components lagged behind parent agencies in market research: 61 percent of components report they “often” or “almost always” consider Section 508 compared to 70 percent of parent agencies.

Both groups reported comparable performance in verification of deliverables: 56 percent of components and 60 percent of parent agencies reported “often” or “almost always” verifying deliverables. However, many components selected “sometimes” or “rarely” across multiple activities, indicating inconsistent application of accessibility requirements, an inconsistency also reflected in agency-level responses.

Components continue to struggle with contract management and enforcement. Only 21 percent of components reported “often” escalating nonconformance issues to vendors. **Parent agencies reported stronger escalation practices**, with 50 percent indicating they “almost always” escalate accessibility issues and 20 percent reporting they “often” do.

Taken together, components frequently include accessibility requirements and participate in key acquisition steps, but inconsistent verification and enforcement continue to limit effective Section 508 implementation across the acquisition lifecycle.

| Frequency | Section 508 compliance is considered in market research | ICT solicitations include all applicable ICT accessibility requirements | Section 508 compliance is considered in the technical evaluation of ICT | Compliance or performance clauses are included in contracts to ensure vendor accountability | Nonconformance issues are escalated to vendors or contractors when found | ICT deliverables from a contract are verified for Section 508 conformance |
|---|---|---|---|---|---|---|
| Never (0%) | 1% | 0% | 3% | 4% | 5% | 6% |
| Rarely (1%-10%) | 10% | 6% | 11% | 11% | 9% | 11% |
| Sometimes (11%-50%) | 23% | 13% | 17% | 15% | 20% | 22% |
| Often (50%-90%) | 27% | 26% | 23% | 25% | 21% | 27% |
| Almost always (≥90%) | 34% | 47% | 39% | 37% | 39% | 29% |
| N/A – does not perform activity | 5% | 7% | 7% | 9% | 6% | 5% |
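The component-level "often" or "almost always" shares discussed above can be derived from the component frequency table by adding the two rows for each activity. The following sketch uses the rounded percentages from that table; the short activity labels are abbreviations introduced here for readability.

```python
# Component responses from the frequency table (rounded percentages).
activities = [
    "Market research",
    "Solicitation requirements",
    "Technical evaluation",
    "Contract clauses",
    "Escalation to vendors",
    "Deliverable verification",
]
often = [27, 26, 23, 25, 21, 27]
almost_always = [34, 47, 39, 37, 39, 29]

# Share of components performing each activity "often" or "almost always".
frequent = [o + a for o, a in zip(often, almost_always)]
average_frequent = sum(frequent) / len(frequent)

for activity, share in zip(activities, frequent):
    print(f"{activity}: {share}%")
print(f"Average across activities: {average_frequent:.1f}%")

# Gap between setting requirements (solicitations) and verifying deliverables.
gap = frequent[1] - frequent[5]
print(f"Requirements-versus-verification gap: {gap} percentage points")
```

Summing rounded table values gives 73 percent for solicitation requirements, one point below the 74 percent cited in the narrative; the difference is most likely a rounding effect in the published figures. The average across the six activities rounds to the 62 percent cited in the text.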

Agencies that more fully integrate ICT accessibility into acquisition and procurement policies and functions report higher acquisition factor outcomes than agencies with less integration. Agencies reporting full integration averaged 4.74 (Very High), compared to 1.63 (Low) for agencies reporting no integration.

Acquisition outcomes do not correlate meaningfully with conformance results of tested ICT (c-index) or Testing and Remediation outcome, indicating that stronger acquisition alone does not ensure accessible outcomes without validation and follow-through.

Components show a similar pattern: stronger integration is generally associated with better acquisition outcomes. However, a notable exception exists: the moderately integrated group averaged 2.87 (Moderate), lower than the somewhat integrated group. Components reporting full integration averaged 4.36 (Very High), compared to 2.76 (Moderate) for components that reported no integration of accessibility into acquisition policies and functions. Components’ Testing and Remediation outcomes do not correlate strongly with their Acquisition and Procurement outcomes.

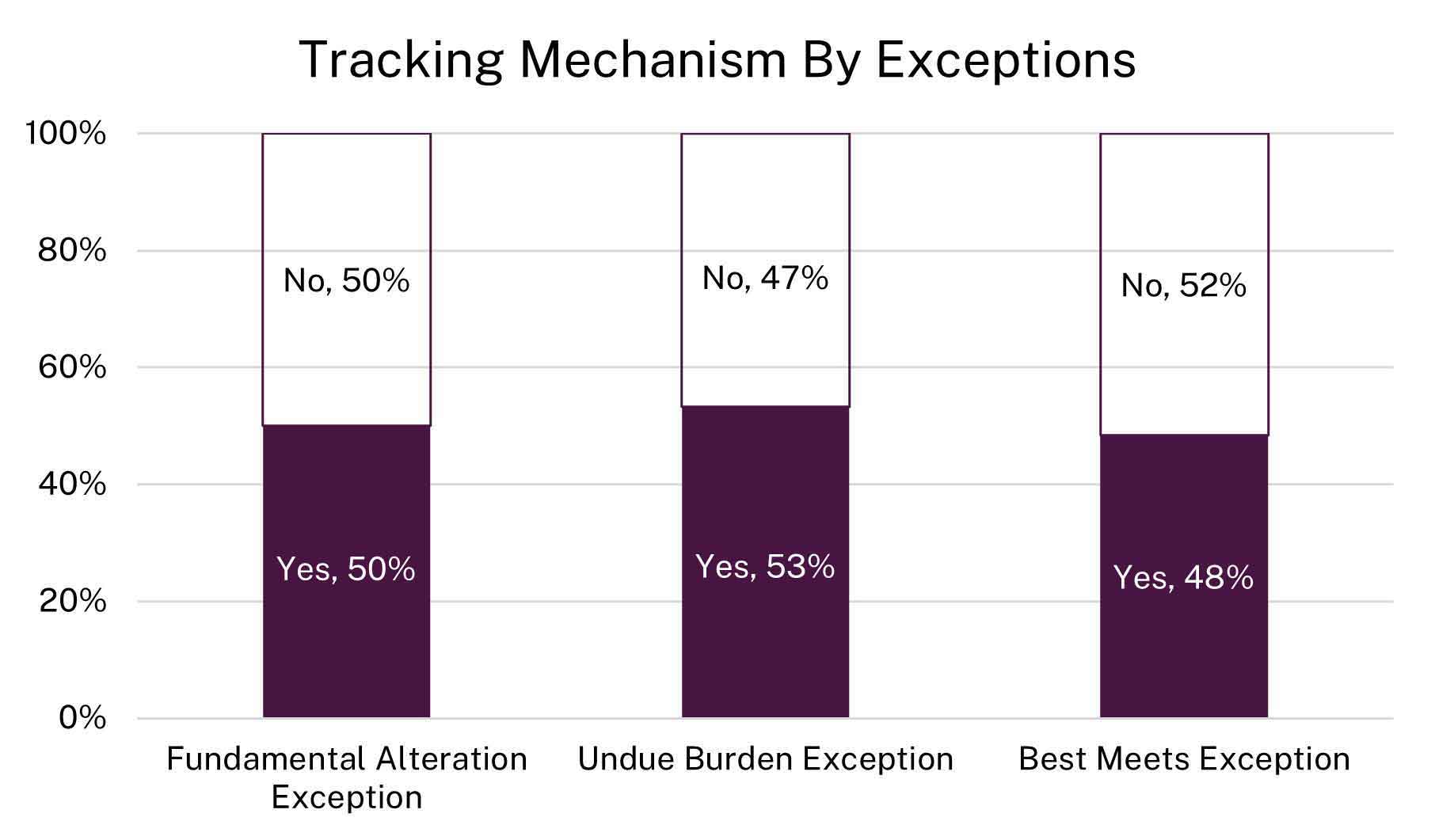

Exceptions

The assessment asked agencies and components whether they track “Fundamental Alteration,” “Undue Burden,” and “Best Meets” exceptions, how many are currently authorized, and whether they create and maintain required alternative means plans.

Responses reveal a significant governmentwide gap. Approximately half of agencies and more than half of components reported no tracking process for these exceptions (Figure 9). Compounding this gap, approximately 67 percent of agencies and more than 70 percent of components reported that they do not create or maintain alternative means plans.

Agencies and components reported totals for currently authorized exceptions. Best Meets accounts for the highest volume of these authorized exceptions.

Total Authorized Fundamental Alteration Exceptions

Total Authorized Undue Burden Exceptions

Total Authorized Best Meets Exceptions

Best Practices and Remaining Challenges

Over the past year, some agencies strengthened the integration of Section 508 requirements into acquisition processes by updating contract language, improving exception and exemption documentation, and formalizing request evaluation procedures. Agencies that integrated accessibility reviews into Federal Information Technology Acquisition Reform Act (FITARA) processes, authorization to operate (ATO) workflows, and software request procedures gained significantly improved early visibility into accessibility risks. Centralizing IT acquisition reviews, automating Section 508 checklists, and strengthening market research using accessibility conformance reports (ACRs) supported more consistent oversight. These practices strengthen day-one accessibility expectations, help identify non-compliant solutions earlier, and support more effective vendor engagement.

However, substantial challenges remain. Agencies reported inconsistent adoption of Section 508 contract language and uneven leadership support for enforcement. Vendor accessibility claims remain difficult to validate, and limited shared guidance and tools hinder more uniform implementation. Many digital services and IT systems still enter production without required accessibility testing, increasing remediation costs and compliance risks. Limited capacity, growing review demands, and tooling needs continue to strain acquisition and compliance teams. Strengthening accountability, standardizing post-award controls, and improving vendor oversight are essential next steps to ensure accessible technology acquisitions.

Testing and Remediation

Key Takeaways

Assessment

The assessment examined how agencies test ICT for Section 508 conformance and remediate accessibility across ICT types, including hardware, software, public electronic documents, public web pages, and internal web pages. Specifically, the assessment addressed:

Components answered these questions only if they performed Section 508 testing independently or in addition to their parent agency. Sixty agencies and 106 components provided responses.

Findings

Section 508 testing and remediation are critical to ensuring federal digital products and services are accessible to all Americans. Without systematic testing, agencies cannot identify accessibility defects, and without remediation those defects persist. Sustained testing and remediation support compliance with federal law, reduce rework and cost, and enable equitable access to digital information and services.

Agencies reported an average Testing and Remediation factor outcome of 2.00 (Low) on a 5-point scale, with results ranging from 0 to 4.73. Among the 106 components from 10 agencies that perform Section 508 testing independently or in addition to the parent agency, the average outcome was 2.56 (Moderate) on a 5-point scale with results ranging from 0 to 5. The corresponding parent agencies reported a slightly higher Moderate average of 2.98.

Testing and remediation is the weakest area of Section 508 implementation across the federal enterprise and continues to constrain overall accessibility outcomes. While agencies that apply more systematic testing and remediation practices tend to achieve higher conformance for the ICT they test, most agencies do not test consistently across ICT types or stages of the lifecycle. Gaps are most pronounced for hardware and internal web content, and user-centered practices such as usability testing with people with disabilities are uncommon. Where agencies establish clearer remediation expectations, such as defined timelines or standardized processes, outcomes improve, but these practices are not widely adopted. The findings that follow illustrate how uneven testing coverage and limited remediation maturity continue to limit progress toward sustained, governmentwide Section 508 conformance.

Governance, Risk, and Compliance

Only 30% of agencies and 33% of components reported using a GRC tool to manage Section 508 compliance, though some components used GRC tools even when their parent agency did not. Agencies and components using GRC tools achieved significantly higher Testing and Remediation and overall Conformance outcomes than those without GRC tools.

Hardware and Software Accessibility Evidence Tracking

Only 30% of agencies systematically track Section 508 conformance evidence for hardware and 40% for software, with most agencies collecting evidence on an ad hoc or incomplete basis rather than through formal processes. Agencies reported higher average evidence coverage for software (54%) than for hardware (43%), likely reflecting more established procurement and testing practices for software and greater familiarity with software accessibility risks. However, wide ranges (0–100%) for both indicate inconsistent practices and uneven maturity.

Testing and Remediation Outcomes

There is a moderate positive correlation between Testing and Remediation outcomes and conformance outcomes, indicating that agencies with stronger testing and remediation practices achieved higher conformance for tested ICT.

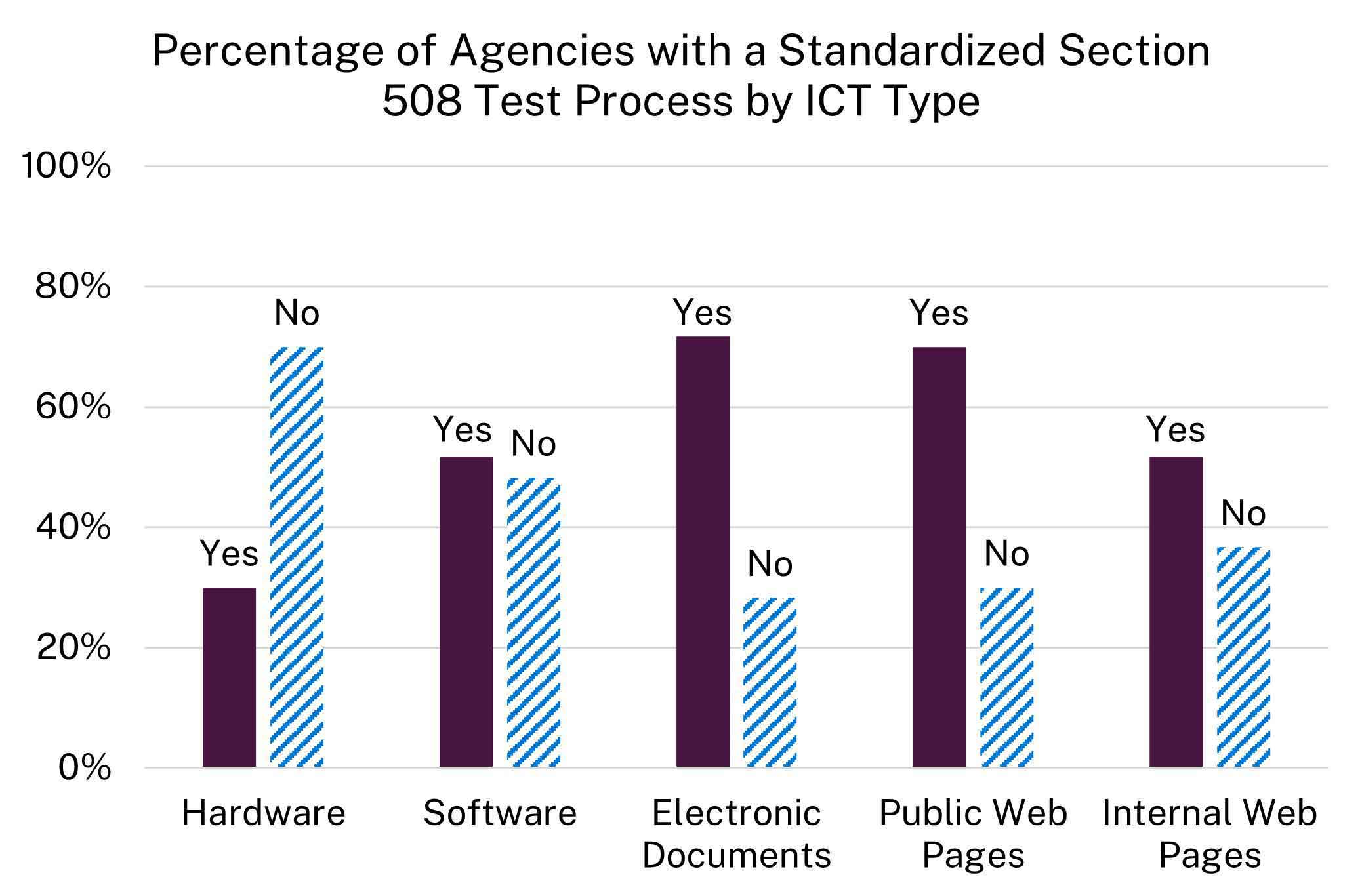

Agencies do not consistently adopt standardized Section 508 testing practices across ICT types. While more than 70 percent of agencies reported standardized testing for electronic documents and public web pages, only about 50 percent reported standardized processes for software and internal web pages, and only 30 percent reported a standardized process for hardware.

As shown in Figure 10:

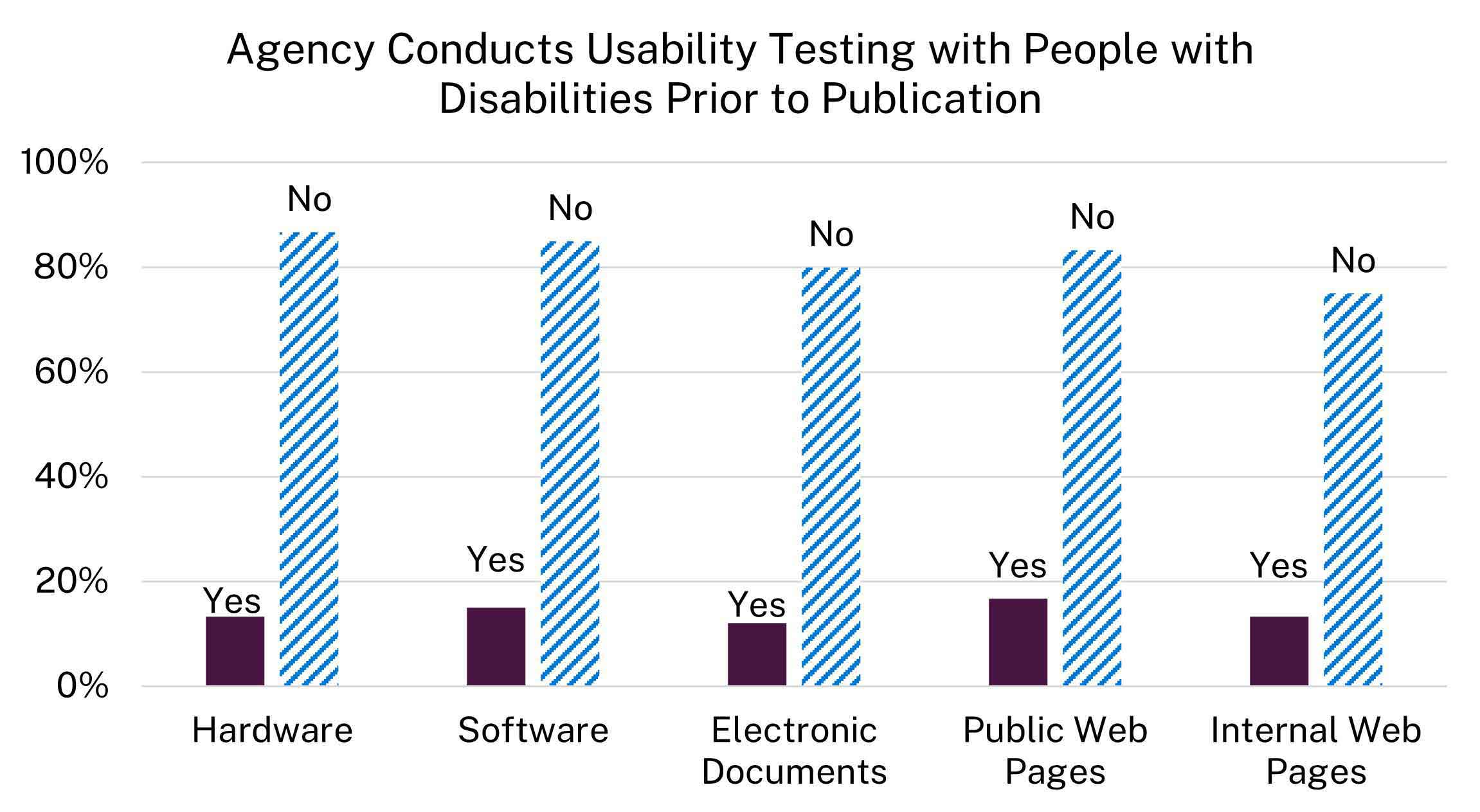

Usability testing with people with disabilities is rare across all ICT types. Only between 12 percent and 17 percent of agencies reported conducting usability testing with users with disabilities prior to deployment, depending on ICT type. As a result, most agencies deploy ICT without validating real-world accessibility.

Agencies also show limited user-centered accessibility verification beyond formal testing. Only 28 percent of agencies reported a process for consulting with individuals with disabilities or disability organizations, while most do not. Components reported higher consultation rates, but of the 106 components that perform any Section 508 testing, nearly half still lack such a process.

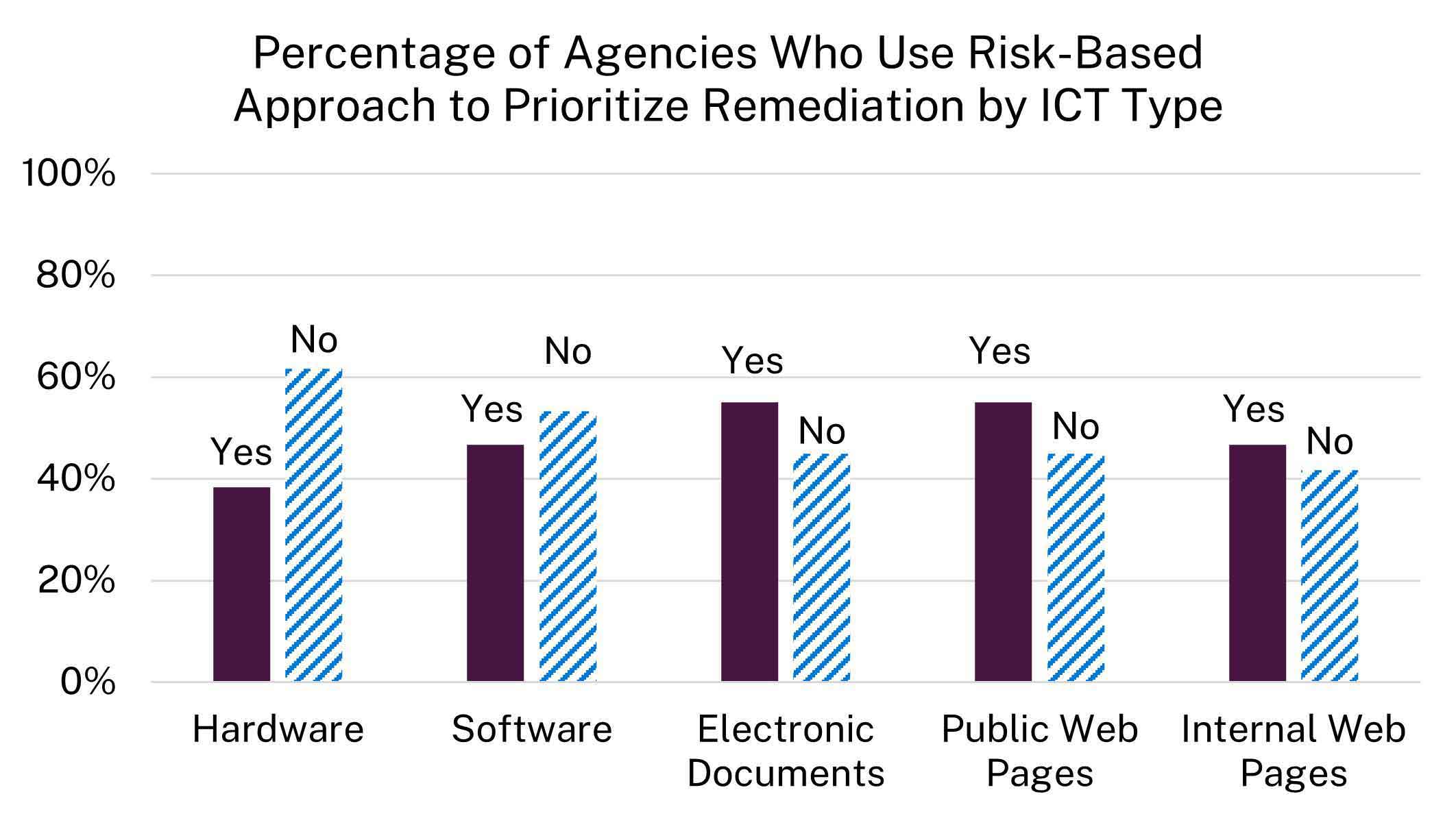

Agencies apply risk-based approaches unevenly, with hardware and internal web pages showing the weakest adoption. Many agencies either prioritize remediation without a formal, documented framework or fail to prioritize it altogether. Figure 12 shows:

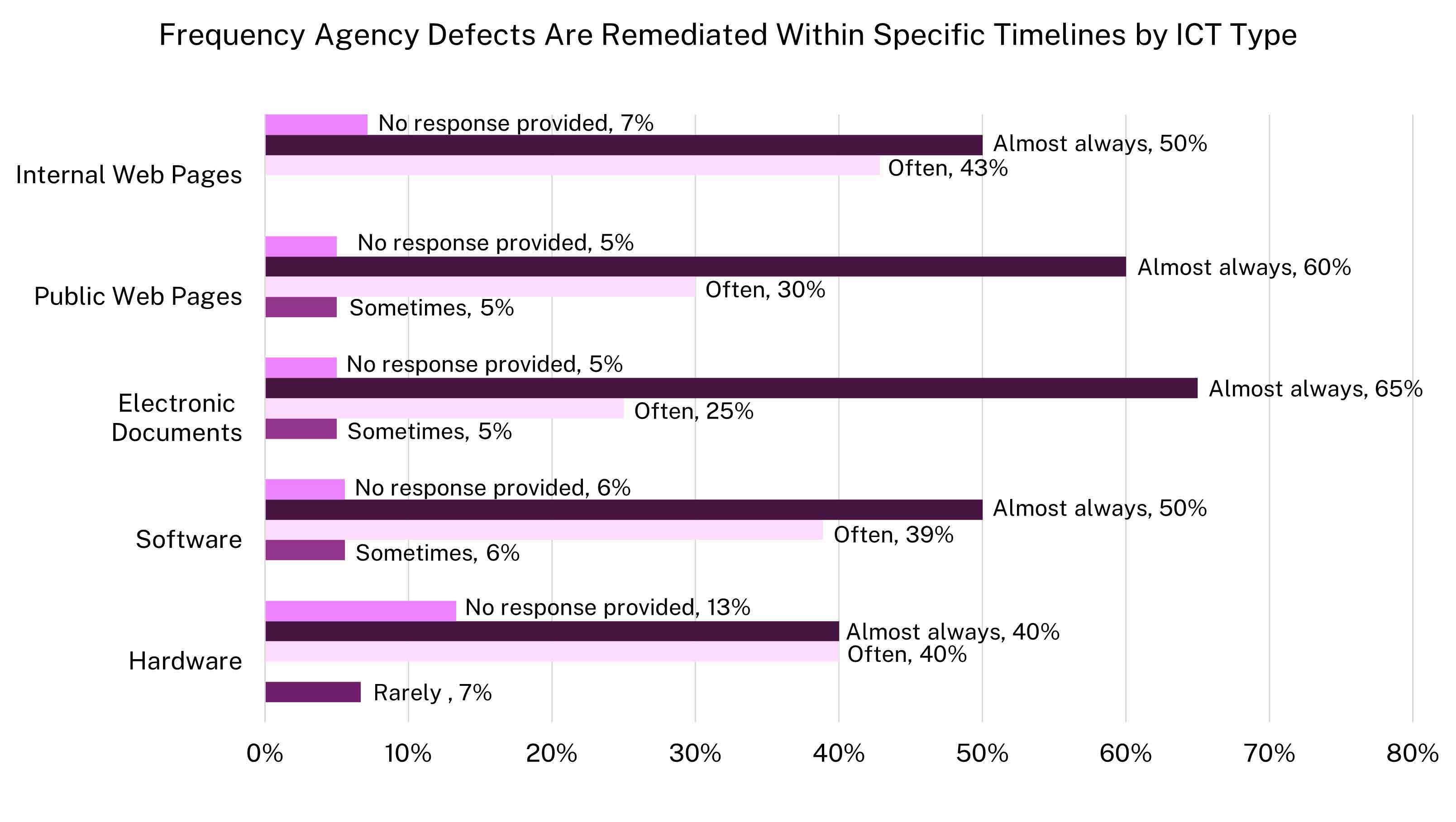

Most agencies do not require remediation timelines; however, agencies that establish timelines generally meet them, demonstrating that clear remediation expectations significantly improve accessibility outcomes. Approximately 70 percent of agencies reported no required timelines across ICT types. Where timelines exist, 80 percent to 90 percent of agencies reported remediating within those timelines.

Agencies most consistently apply manual Section 508 testing to public-facing web pages, where testing “often” or “almost always” is most common, reflecting prioritization of systems with high public visibility and compliance risk. In contrast, hardware receives the least manual testing attention, with a large share of agencies reporting they “never” or “rarely” test hardware prior to deployment, indicating that Section 508 conformance validation for hardware frequently does not occur. Manual testing of software, electronic documents, and internal web pages falls between these extremes, with substantial portions of agencies reporting only occasional or inconsistent testing. Overall, submitted data show that manual testing practices remain uneven across ICT types, with agencies prioritizing public-facing content while under-testing hardware and internal systems, limiting confidence in accessibility outcomes across the full ICT lifecycle.

Automated testing follows a similar pattern, with lower and inconsistent use on internal web content and electronic documents. These patterns indicate that many agencies lack standardized, enterprise-wide testing prior to deployment. Overall, testing and remediation practices remain uneven and underdeveloped, constraining improvements in Section 508 conformance across the federal ICT portfolio.

Best Practices and Remaining Challenges

Over the past year, several agencies strengthened their Section 508 programs by embedding accessibility more consistently into governance, development, and testing workflows. Agencies improved the efficiency and consistency of accessibility evaluations by adopting automated and hybrid testing approaches and aligning defect tracking with enterprise inventory systems. Many agencies embedded accessibility earlier in the ICT lifecycle by integrating Section 508 requirements into software development processes, establishing standardized templates, and requiring accessibility review of documents and applications prior to release. Collaboration between accessibility teams and developers further strengthened lifecycle integration by embedding conformance checks into common tools and platforms.

Agencies also strengthened remediation and maintenance processes and expanded the evaluation of online training materials. Several agencies increased scalability through internal tools that support conformance reporting, tracking, and remediation. Training initiatives expanded, with some agencies training more than 1,000 personnel and participating in regular accessibility communities of practice.

Despite this progress, significant structural challenges continue to limit consistent Section 508 implementation. Limited staffing, constrained resources, and gaps in specialized expertise hinder agencies’ ability to sustain comprehensive testing, evidence tracking and remediation at scale. Agencies report that vendor-provided accessibility conformance reports remain inconsistent or unreliable, increasing the burden on agencies to independently validate conformance. Programs also report challenges integrating accessibility early in development and deploying automated testing tools within secure enterprise environments.

Agencies continue to face challenges maintaining enterprise-wide visibility into ICT assets, enforcing consistent practices across offices and components, and ensuring content owners understand and meet accessibility requirements.

Taken together, these findings show that while targeted investments and improved practices are yielding progress, sustainable Section 508 compliance will require stronger governance, earlier lifecycle integration, improved vendor accountability, and continued investment in workforce capacity and testing infrastructure.

Section 508 Management

Key Takeaways

Assessment

The FY 2025 assessment criteria included questions about agencies' Section 508 programs, such as:

GSA collected data from 60 agencies and from 152 components of 12 of those agencies. Responses varied governmentwide.

Findings

A strong Section 508 program helps agencies meet statutory requirements and ensures that individuals with disabilities can access ICT products and digital services. Prioritizing ICT accessibility allows agencies to standardize development and procurement processes, reduce rework, improve efficiency, and keep projects on schedule. Clear Section 508 policies set expectations, support accountability, and contribute to a more effective procurement and development lifecycle.

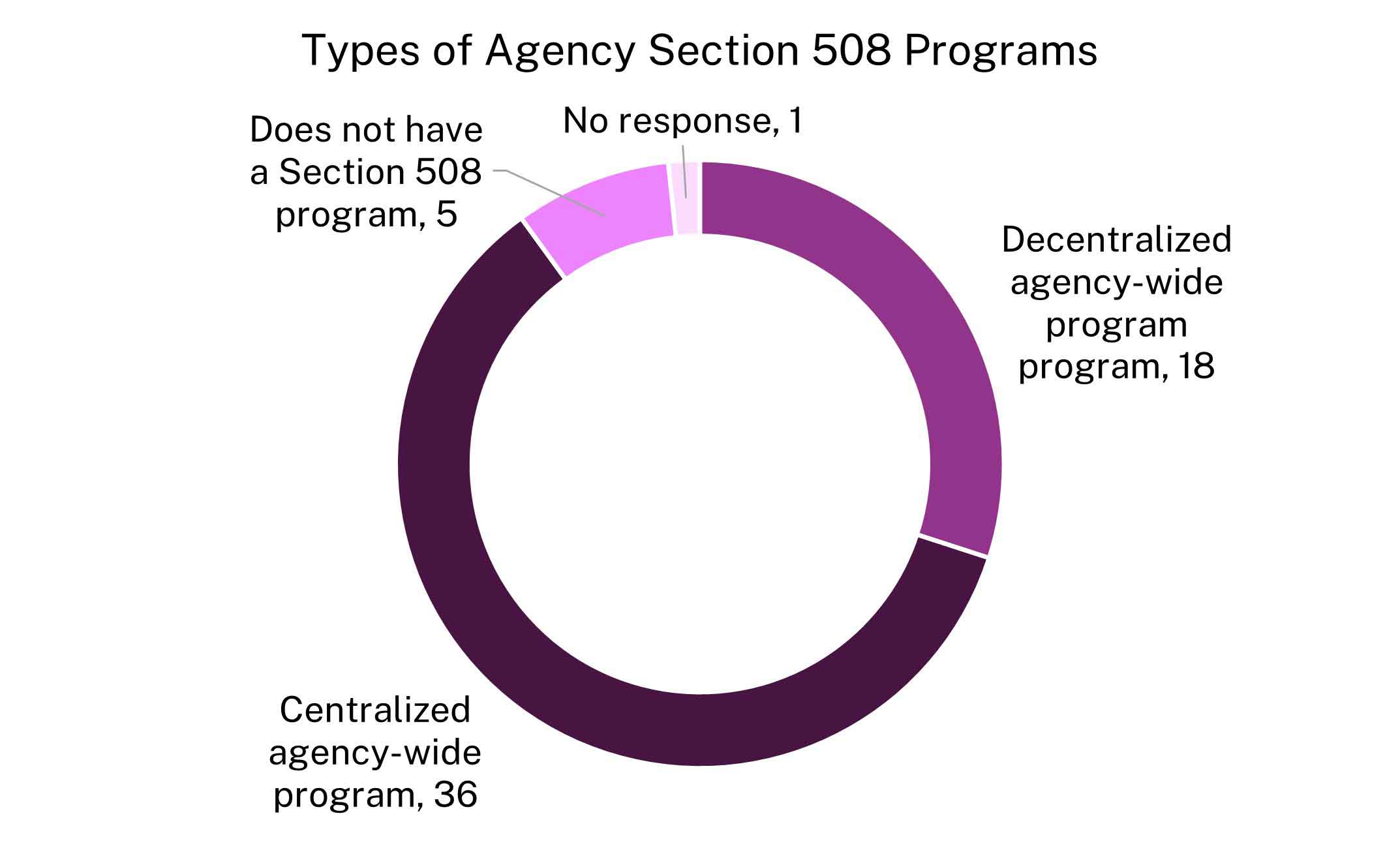

Most agencies report having a Section 508 program, but program structures vary and directly affect implementation and accountability. Of the 60 reporting agencies, 54 reported having a Section 508 program. Among these, 36 agencies operate centralized programs, while 18 operate decentralized programs. This structural variation shapes how accessibility responsibilities are managed and enforced across the enterprise.

Decentralized models rely heavily on component-level execution. Across 11 agencies, 115 components reported having their own Section 508 programs, indicating that much of the day-to-day accessibility work occurs at the component level rather than solely within an agency-wide program. As a result, the effectiveness of Section 508 implementation depends on how well these component programs are resourced, coordinated, and aligned with agency-wide policies and oversight mechanisms.

Strengthening governance frameworks to connect agency-wide leadership with component-level execution is critical to achieving consistent, scalable, and accountable ICT accessibility outcomes across the federal government. See Figure 14 for a breakdown of program structures across agencies.

Staffing

Both agencies and components provided data on Section 508 PM designation, time spent supporting Section 508 efforts, Section 508 staffing and contractor resources, and Section 508-related training. Responses varied across respondents in each category.

Responses from 60 agencies show:

Responses from 152 components from 12 parent agencies show:

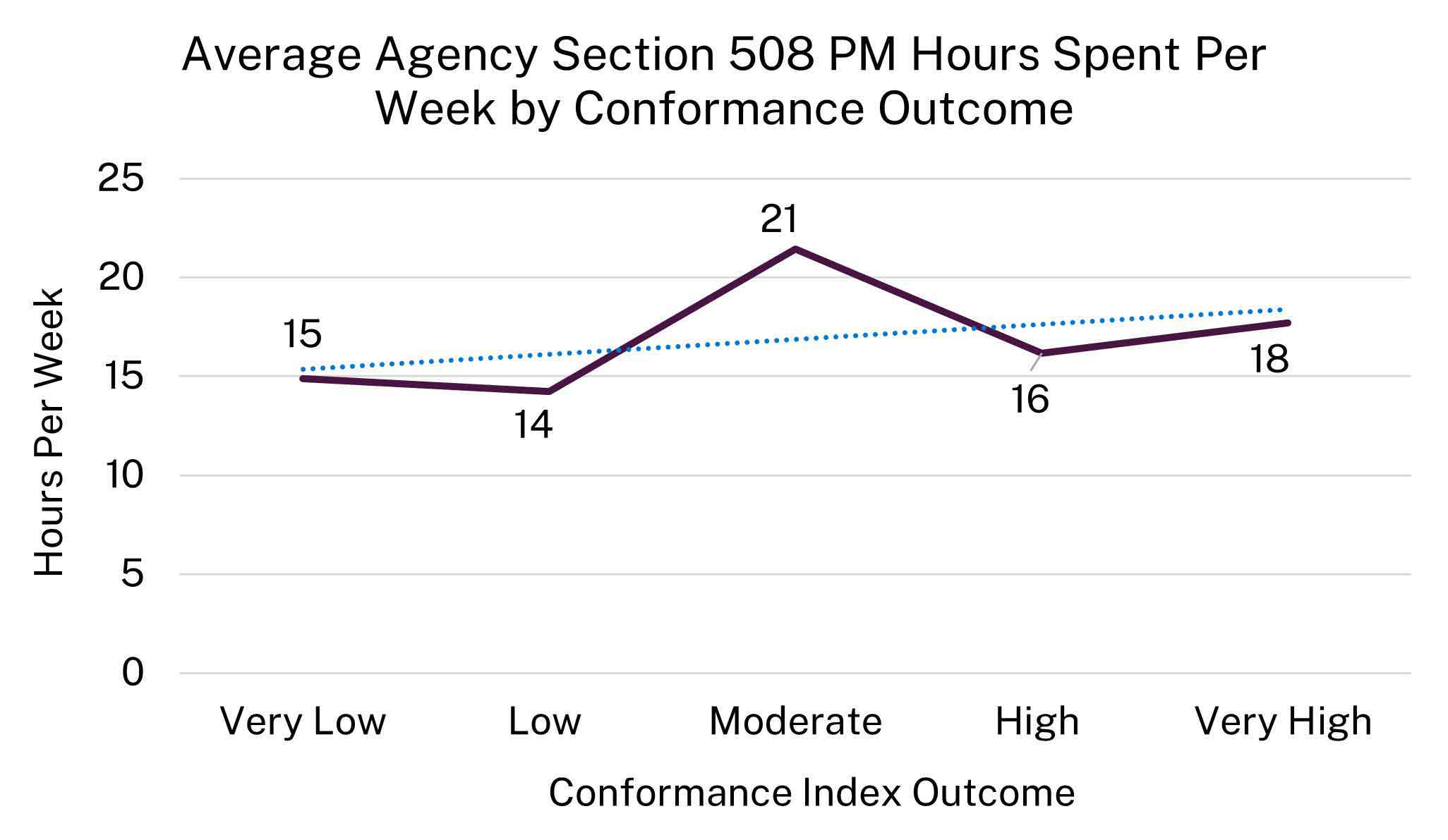

Taken together with conformance outcomes, Figure 15 shows that there is a slight positive relationship between the average hours per week an agency Section 508 PM dedicates to program activities and the conformance outcomes for that agency. Agencies tend to have more conformant ICT when the Section 508 PM can dedicate more time to the Section 508 program.

Agency Staffing

Among the 60 agencies:

Component Staffing

Across 152 components from 12 parent agencies:

Management of ICT Accessibility Across the Agency

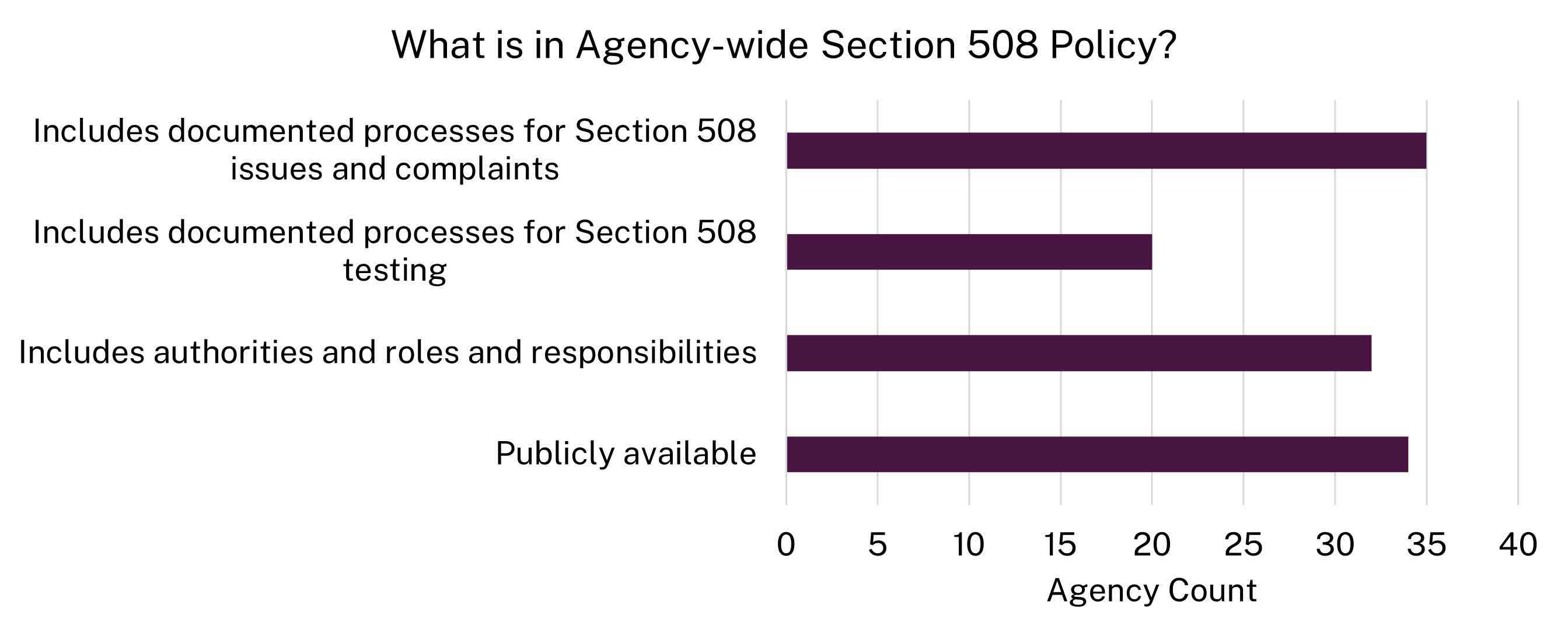

A Section 508 or ICT accessibility policy provides the governance foundation agencies need to comply with federal accessibility requirements. It defines authorities, roles, responsibilities, expectations, and processes that ensure ICT accessibility is embedded across procurement, development, content creation, and IT operations. Most agencies have a Section 508 policy, but not all:

Of the 48 that have a policy:

All 12 agencies with components reported having an agency-wide Section 508 or ICT accessibility policy. Eighty-eight components, or 58 percent, reported having a component-level Section 508 or ICT accessibility policy in addition to the agency-wide policy.

Submitted data illustrate how agencies and components allocate staff, contracting, and technical resources to Section 508 compliance:

Training

Effective Section 508 implementation depends on a workforce that understands ICT accessibility requirements and how to apply them throughout the ICT lifecycle. Responsibility for accessibility spans acquisition staff, designers, developers, testers, content authors, and program managers. Without consistent training, agencies incur higher remediation costs and continue to deploy inaccessible technology and digital content.

Assessment results show that enterprise-wide Section 508 training is uncommon. Only 16 agencies (27 percent) and 38 components (25 percent) reported mandatory Section 508 training, meaning that roughly 75 percent of federal agencies lack a baseline requirement for accessibility awareness or skills.
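The percentages above follow directly from the reported counts. The short sketch below reproduces that arithmetic, assuming the denominators are the 60 responding agencies and 152 responding components cited elsewhere in this report. The helper name `percent_share` is illustrative and not part of the assessment methodology.

```python
def percent_share(count: int, total: int) -> int:
    """Return count as a whole-number percentage of total."""
    if total <= 0:
        raise ValueError("total must be positive")
    return round(100 * count / total)


# Counts reported in this section; totals are the responding populations.
AGENCIES_TOTAL = 60
COMPONENTS_TOTAL = 152

agencies_with_training = percent_share(16, AGENCIES_TOTAL)        # 27
components_with_training = percent_share(38, COMPONENTS_TOTAL)    # 25

# Share without a mandatory training requirement.
agencies_without = percent_share(AGENCIES_TOTAL - 16, AGENCIES_TOTAL)        # 73
components_without = percent_share(COMPONENTS_TOTAL - 38, COMPONENTS_TOTAL)  # 75

print(agencies_with_training, components_with_training)
print(agencies_without, components_without)
```

Under these assumptions, about 73 percent of agencies and 75 percent of components report no mandatory training, which is consistent with the "roughly 75 percent" figure.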

Even when training is required, frequency is inconsistent. Among the 16 agencies with mandatory training, most require annual training, but the others require only one-time or irregular training. Component-level responses show a similar pattern, with variation in whether training is annual, one-time, or unspecified. Some components require Section 508 training, even when their parent agencies do not, creating inconsistent standards within the same agency.

Role-specific training is also limited, despite its importance to compliance. Only a minority of agencies require additional training for key roles:

Overall, 55 percent of agencies require none of these groups to take role-specific Section 508 training, despite their direct responsibility for accessibility implementation and conformance outcomes. Component-level data reflect a nearly identical pattern.

Complaints

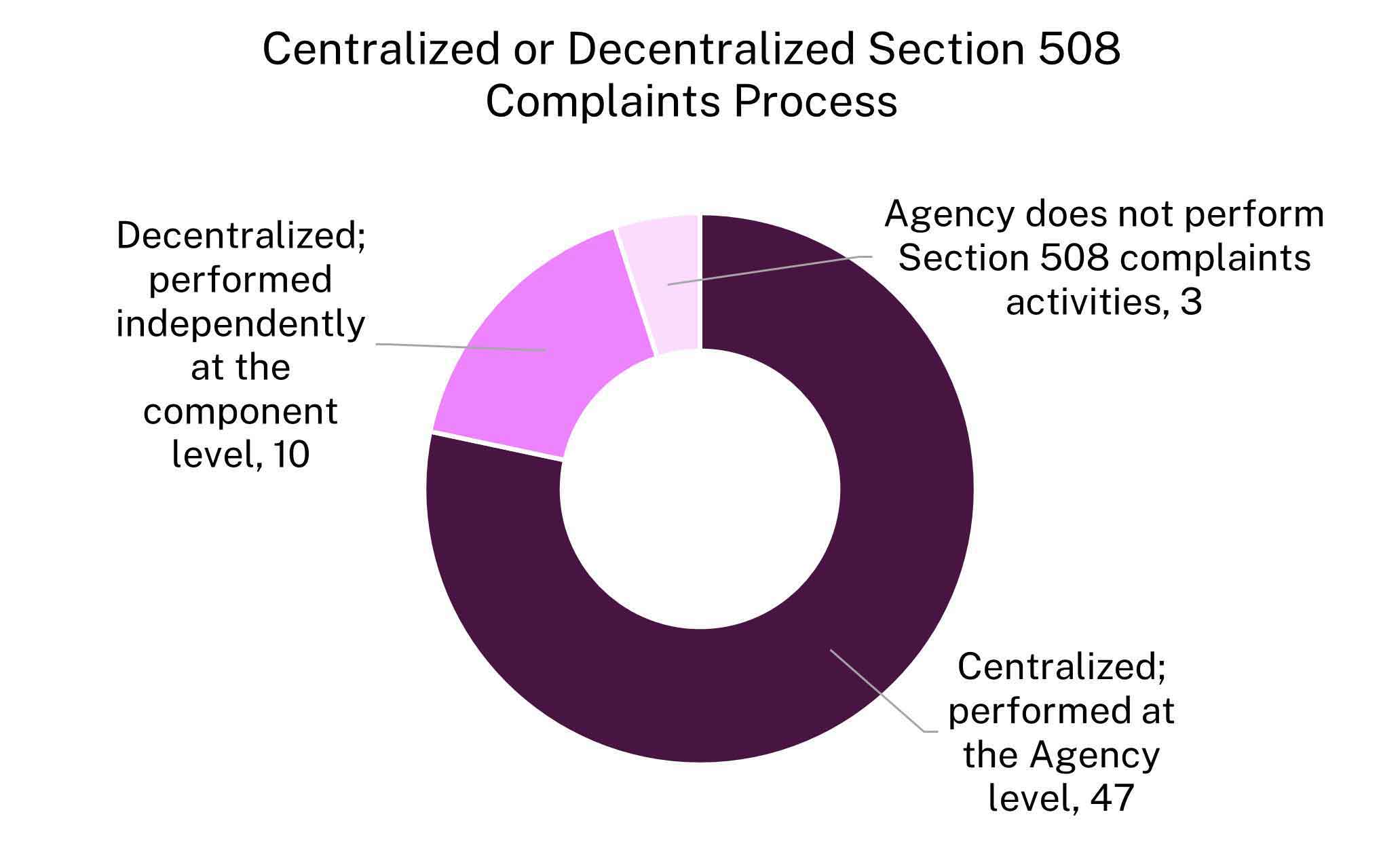

Under Section 508 of the Rehabilitation Act (29 U.S.C. § 794d(f)(2)), agencies receiving Section 508 complaints must apply the complaint procedures established under Section 504 for resolving allegations of discrimination in federally conducted programs or activities. Agencies and components organize Section 508-related complaints differently, with some centralizing these activities and others delegating responsibility to components.

Among the 60 reporting agencies, 47 agencies centralize Section 508 complaint processing at the agency or parent level, while 10 agencies use a decentralized approach, allowing components to process complaints independently. **Three agencies reported that they do not perform any Section 508-related complaint activities.** Figure 17 illustrates the distribution of centralized and decentralized complaint processes.

At the component level, 100 components across 10 parent agencies reported that they process Section 508 complaints independently, either as part of a decentralized model or in addition to the parent agency. Of these, three parent agencies reported that they centralize complaint processing, while some of their components also perform the activity independently or in addition to a centralized agency process. This hybrid approach indicates that complaint handling often occurs at multiple organizational levels, increasing the importance of coordination and consistent oversight.

Most agencies have mechanisms to track complaints, but gaps remain. Fifty-one agencies reported having a process to track Section 508-related complaints, while nine agencies reported no tracking process, limiting visibility into complaint trends, resolution timelines, and recurring accessibility issues.

Of the 15 agencies that received Section 508-related complaints within the past 365 days (from agency submission):

Best Practices and Remaining Challenges

Over the past year, some agencies reported progress in advancing Section 508 compliance through targeted Section 508 policy updates, expanded training, and stronger governance structures. Several agencies appointed full-time Section 508 PMs, updated or issued agency-wide accessibility policies, and launched strategic plans to guide implementation. Agencies also expanded internal guidance, including updated Web Content Accessibility Guidelines 2.2 interpretation materials, self-service resources and centralized knowledge libraries. The use of data-driven metrics, dashboards, and early-stage testing practices improved agencies’ ability to identify accessibility issues sooner and reduce downstream defects. Increased role-based training and broader adoption of mandatory courses further supported enterprise-wide awareness and implementation.

Despite this progress, agencies continue to face significant constraints. Limited funding, staffing shortages, and workforce turnover reduce the capacity to conduct systematic testing and sustain institutional knowledge. In decentralized environments, insufficient coordination and staffing at both agency and component levels hinder consistent and effective ICT implementation across the federal government. Agencies also report cultural and governance challenges, including uneven leadership support, limited accountability mechanisms, and difficulty keeping pace with evolving accessibility standards. Without sustained investment, clearer governance, and centralized support, agencies will continue to struggle to scale accessibility efforts and deliver consistently accessible digital services.

Recommendations

Recommendations to Congress

Update and clarify Section 508 statutory requirements

Update Section 508 of the Rehabilitation Act (29 U.S.C. § 794d) and 29 U.S.C. § 794d-1 to clearly define which federal agencies are subject to Section 508, which will improve enforceability and clarify agency response requirements for the annual assessment.

Clarify and align ICT accessibility reporting requirements under 29 U.S.C. §§ 794d and 794d-1 to eliminate duplicative reporting and reduce unnecessary agency burden.

Strengthen enforcement and accountability for Section 508 compliance

Explore legislative options to improve enforcement of Section 508 compliance across the federal government, recognizing that overall compliance remains low.

Consider oversight and enforcement approaches used in cybersecurity, such as risk-based authorization to operate (ATO), continuous monitoring, and mandatory reporting under FISMA.

Increase congressional oversight of Section 508 implementation

Examine compliance gaps and better understand challenges, risks and successful practices.

Request updates from agency heads on corrective actions planned or underway to improve Section 508 compliance over the next year.

Use assessment findings, Office of the Inspector General reports, and agency independent validation results to inform congressional oversight of agency acquisition practices and to assess the accessibility of major software vendors and IT service providers whose products are widely deployed across government.

Recommendations to Federal Agencies

Strengthen leadership support and accountability for Section 508 compliance

Reinforce that Chief Information Officers (CIO) lead the integration of Section 508 across the ICT lifecycle, embedding accessibility controls into existing processes, infrastructure, and governance using current staff and resources.

Include Section 508-related metrics in CIO performance plans.

Integrate Section 508 into core risk management frameworks

Treat ICT accessibility as an integral part of agencies’ already established security, privacy, and risk management lifecycles.

Integrate Section 508 compliance into the risk analysis for major ICT investments and ATO reviews to ensure ICT accessibility is a part of core IT governance.

Use acquisition as a primary lever for Section 508 compliance

Leverage the buying power of the federal government by prioritizing “buy over build” and evaluating ICT through accessibility conformance reports (ACRs) to validate the accuracy of vendor conformance claims.

Systematically track and document all exceptions, including “Best Meets” determinations, and use this data to inform contract reviews, identify recurring accessibility gaps, and guide procurement decisions.

Incorporate accessibility performance into contract renewals, past performance evaluations, and future award considerations.

Reject contract deliverables that fail to meet Section 508 requirements to ensure agencies only pay for ICT products and services that meet federal standards and contractual obligations.

Require all ICT contracts to include defined testing methodologies and right-to-repair provisions to ensure vendors fix, replace, or correct non-conforming products at their own expense.

Verification and enforcement steps lag with almost half of all agencies only “sometimes”, “rarely”, or “never” verifying contract deliverables for Section 508 conformance.

Strengthen and optimize Section 508 resourcing and governance

Leverage shared services, federal buying power, common tools, and cross-government expertise to improve ICT accessibility outcomes at lower cost and to deliver greater value to the taxpayer.

Prioritize the procurement and use of accessible authoring tools to enable the creation of accessible content by default, reducing the introduction of accessibility defects at the source, lowering or eliminating remediation and rework costs, and accelerating delivery of accessible digital content and services.

Forty-three agencies failed to respond to the FY 2025 assessment and more than half of responding agencies cited resource limitations.

Require annual role-based Section 508 training

Require annual Section 508 training for all employees who create, maintain, or contribute to agency ICT by embedding accessibility training by roles and responsibilities into mandatory onboarding and annual learning requirements.

55% of agencies do not provide Section 508 training tailored to specific roles despite the fact that key personnel, including those in acquisitions, developers, document authors, web content managers, and testing staff, have direct responsibility for Section 508 compliance.

Use guidance and training resources on Section508.gov to integrate Section 508 principles, checkpoints, and accessibility risk awareness into training related to content authoring, generative artificial intelligence (AI), automation, data science, and digital modernization.

Ensure personnel whose position descriptions include responsibility for handling Section 508-related complaints complete role-specific training on intake, triage, documentation, and resolution workflows, including coordination with civil rights, legal, and ICT accessibility offices.

Identify the highest-priority training gaps using compliance findings, complaint trends, and internal audit results, and address them through targeted upskilling via role-based courses, hands-on workshops and labs, and communities of practice.

Expand Section 508 conformance validation and remediation

Expand automated and manual Section 508 conformance testing, validation, and defect remediation before deployment.

Apply a risk-based approach that prioritizes high-impact and high-use ICT, with targeted manual testing and remediation efforts for ICT supporting public-facing information, services, benefits, and programs.

Align agency testing methodologies with the Baselines for Web and Electronic Documents to ensure comprehensive coverage of requirements and support the effective use of generative AI.

Leverage existing AI tools and train staff to configure and prompt these tools to automatically generate, evaluate, and remediate digital content for conformance to Section 508 standards.

Average conformance remains below 2 on the 5-point conformance index scale, and an average of 23% of agencies were unable to test their top-viewed ICT.

Generative AI systems produce new content, such as text, images, or documents. Agencies may leverage generative AI to create electronic documents or web pages. Aligning agency testing methodologies with the Baselines helps ensure that content generated or assisted by AI consistently adheres to Section 508 requirements before publication or deployment.

Agency Summary Reports

Comprehensive submission data by agency, parent agency, and component can be found at Section508.gov under Assessment & Data Downloads. In addition, a supplemental data dictionary details the assessment criteria, answer selections, dependencies, “understanding content” and variable identifiers.

Each agency summary page contains self-reported data. However, several data points have also been transformed for analysis:

- Overall Performance, consisting of:

Table #1: Performance Outcome Range

| Bracket | Value Range |
| --- | --- |
| Very High | > 4 to 5 |
| High | > 3 to 4 |
| Moderate | > 2 to 3 |
| Low | > 1 to 2 |
| Very Low | 0 to 1 |

- Accessibility Implementation: This measures an agency’s Section 508 implementation across policy, acquisition and procurement, and testing and remediation. This outcome range consists of an index using a scale from 0 to 5, with 0 representing very low implementation and 5 representing very high implementation. For more details on criteria, weight, and scoring, refer to Appendix A: Methods.

- Accessibility Conformance: This measures the conformance of an agency’s ICT based on responses to nine criteria. This outcome range consists of an index using a scale from 0 to 5, with 0 representing very low conformance and 5 representing very high conformance. For more details on criteria, weight, and scoring, refer to Appendix A: Methods.

- Visual of where the agency falls on the overall performance quadrant for accessibility implementation and conformance, with very low in the bottom left corner and very high in the top right corner.

- Federal accessibility factors consolidate outcomes from four distinct assessment sections to determine performance levels. For more details on criteria, weight, and scoring, refer to Appendix A: Methods.
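The bracket ranges in Table #1 can be expressed as a small lookup. The sketch below is illustrative only: the function name `outcome_bracket` is an assumption and is not taken from the assessment's methodology, and the code assumes each range is exclusive at its lower bound and inclusive at its upper bound, as the "> 1 to 2" notation suggests.

```python
from bisect import bisect_left

# Upper bound of each bracket from Table #1, in ascending order.
# Assumption: ranges are upper-inclusive, so 2.00 is "Low" and 2.01 is "Moderate".
_UPPER_BOUNDS = [1.0, 2.0, 3.0, 4.0, 5.0]
_BRACKETS = ["Very Low", "Low", "Moderate", "High", "Very High"]


def outcome_bracket(score: float) -> str:
    """Map a 0-to-5 outcome index value to its Table #1 bracket label."""
    if not 0.0 <= score <= 5.0:
        raise ValueError(f"score must be between 0 and 5, got {score!r}")
    # bisect_left returns the index of the first upper bound >= score,
    # which makes each bracket inclusive of its upper bound.
    return _BRACKETS[bisect_left(_UPPER_BOUNDS, score)]


# Averages quoted in the Testing and Remediation findings of this report.
print(outcome_bracket(2.00))  # Low
print(outcome_bracket(2.56))  # Moderate
print(outcome_bracket(2.98))  # Moderate
```

A sorted list of upper bounds keeps the boundary handling in one place, which matters here because values such as 2.00 sit exactly on a bracket edge.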

Agencies are listed in alphabetical order.

Chief Financial Officers (CFO) Act Agencies

Department of Agriculture (USDA)

- Department of Agriculture (USDA)

- Agricultural Marketing Service (AMS)

- Agricultural Research Service (ARS)

- Animal and Plant Health Inspection Service (APHIS)

- Economic Research Service (ERS)

- Executive Operations (USDA)

- Farm Production and Conservation (FPC)

- Farm Service Agency (FSA)

- Food and Nutrition Service (FNS)

- Food Safety and Inspection Service (FSIS)

- Foreign Agricultural Service (FAS)

- Forest Service (USFS)

- National Agricultural Statistics Service (NASS)

- National Institute of Food and Agriculture (NIFA)

- Natural Resources Conservation Service (NRCS)

- Office of Chief Financial Officer (USDA)

- Office of Chief Information Officer (USDA)

- Office of Civil Rights (USDA)

- Office of Inspector General (USDAOIG)

- Office of the General Counsel (USDA)

- Office of the Secretary (USDA)

- Risk Management Agency (RMA)

- Rural Business-Cooperative Service (RBCS)

- Rural Development (RD)

- Rural Housing Service (RHS)

- Rural Utilities Service (RUS)

Department of Commerce (DOC)

- Department of Commerce (DOC)

- Bureau of Census (CEN)

- Economic Development Administration (EDA)

- International Trade Administration (ITA)

- National Institute of Standards and Technology (NIST)

- National Oceanic and Atmospheric Administration (NOAA)

- National Telecommunications and Information Administration (NTIA)

- U.S. Patent and Trademark Office (USPTO)

Department of Education (ED)

Department of Energy (DOE)

- Department of Energy (DOE)

- National Nuclear Security Administration (NNSA)

- Power Marketing Administration (DOE)

Department of Health and Human Services (HHS)

- Department of Health and Human Services (HHS)

- Administration for Children and Families (ACF)

- Administration for Community Living (ACL)

- Advanced Research Projects Agency for Health (ARPAH)

- Agency for Healthcare Research and Quality (AHRQ)

- Centers for Disease Control and Prevention (CDC)

- Centers for Medicare and Medicaid Services (CMS)

- Food and Drug Administration (FDA)

- Health Resources and Services Administration (HRSA)

- Indian Health Service (IHS)

- National Institutes of Health (NIH)

- Office of the Inspector General (HHSOIG)

- Office of the Secretary (HHS)

- Substance Abuse and Mental Health Services Administration (SAMHSA)

Department of Homeland Security (DHS)

- Department of Homeland Security (DHS)

- Analysis and Operations (DHS)

- Countering Weapons of Mass Destruction Office (CWMD)

- Cybersecurity and Infrastructure Security Agency (CISA)

- Federal Emergency Management Agency (FEMA)

- Federal Law Enforcement Training Center (FLETC)

- Management Directorate (DHS)

- Office of Health Affairs (DHS)

- Office of the Inspector General (DHSOIG)

- Office of the Secretary and Executive Management (DHS)

- Science and Technology (ST)

- Transportation Security Administration (TSA)

- U.S. Customs and Border Protection (CBP)

- U.S. Immigration and Customs Enforcement (ICE)

- United States Coast Guard (USCG)

- United States Secret Service (USSS)

- Citizenship and Immigration Services (USCIS)

Department of Housing and Urban Development (HUD)

Department of Justice (DOJ)

- Department of Justice (DOJ)

- Bureau of Alcohol, Tobacco, Firearms, and Explosives (ATF)

- Drug Enforcement Administration (DEA)

- Federal Bureau of Investigation (FBI)

- Federal Prison System (BOP)

- Legal Activities and U.S. Marshals (USMS)

- National Security Division (NSD)

- Office of Justice Programs (OJP)

Department of Labor (DOL)

- Department of Labor (DOL)

- Bureau of Labor Statistics (BLS)

- Employee Benefits Security Administration (EBSA)

- Employment and Training Administration (ETA)

- Mine Safety and Health Administration (MSHA)

- Occupational Safety and Health Administration (OSHA)

- Office of Federal Contract Compliance Programs (OFCCP)

- Office of Labor Management Standards (OLMS)

- Office of Workers' Compensation Programs (OWCP)

- Veterans' Employment and Training Service (VETS)

- Wage and Hour Division (WHD)

Department of State (STATE)

Department of the Interior (DOI)

- Department of the Interior (DOI)

- Bureau of Indian Affairs (BIA)

- Bureau of Indian Education (BIE)

- Bureau of Land Management (BLM)

- Bureau of Ocean Energy Management (BOEM)

- Bureau of Reclamation (BOR)

- Bureau of Safety and Environmental Enforcement (BSEE)

- Bureau of Trust Funds Administration (BTFA)

- Central Utah Project (DOI)

- Departmental Offices (DOI)

- Insular Affairs (DOI)

- National Indian Gaming Commission (NIGC)

- National Park Service (NPS)

- Office of Inspector General (DOIOIG)

- Office of Surface Mining Reclamation and Enforcement (OSMRE)

- Office of the Solicitor (DOI)

- United States Fish and Wildlife Service (FWS)

- United States Geological Survey (USGS)

Department of the Treasury (TREAS)

- Department of the Treasury (TREAS)

- Alcohol and Tobacco Tax and Trade Bureau (ATTTB)

- Bureau of Engraving and Printing (BEP)

- Comptroller of the Currency (OCC)

- Departmental Offices (TREAS)

- Federal Financing Bank (FFB)

- Financial Crimes Enforcement Network (FINCEN)

- Fiscal Service (BFS)

- Internal Revenue Service (IRS)

- United States Mint (MINT)

Department of Transportation (DOT)

- Department of Transportation (DOT)

- Federal Aviation Administration (FAA)

- Federal Highway Administration (FHWA)

- Federal Motor Carrier Safety Administration (FMCSA)

- Federal Railroad Administration (FRA)

- Federal Transit Administration (FTA)

- Great Lakes St. Lawrence Seaway Development Corporation (STLSDC)

- Maritime Administration (MA)

- National Highway Traffic Safety Administration (NHTSA)

- Office of Inspector General (DOTOIG)

- Pipeline and Hazardous Materials Safety Administration (PHMSA)

Department of Veterans Affairs (VA)

- Department of Veterans Affairs (VA)

- Benefits Programs (BP)

- Department-Wide Programs (DP)

- Departmental Administration (DA)

- Veterans Health Administration (VHA)

Department of War (DOW)

- Department of War (DOW)

- Defense Acquisition University (DAU)

- Defense Advanced Research Projects Agency (DARPA)

- Defense Commissary Agency (DECA)

- Defense Contract Audit Agency (DCAA)

- Defense Contract Management Agency (DCMA)

- Defense Counterintelligence and Security Agency (DCSA)

- Defense Finance and Accounting Service (DFAS)

- Defense Health Agency (DHA)

- Defense Human Resources Activity (DHRA)

- Defense Information Systems Agency (DISA)

- Defense Intelligence Agency (DIA)

- Defense Legal Services Agency (DOD)

- Defense Logistics Agency (DLA)

- Defense Media Activity (DMA)

- Defense POW/MIA Accounting Agency (DPAA)

- Defense Security Cooperation Agency (DSCA)

- Defense Technical Information Center (DTIC)

- Defense Technology Security Administration (DTSA)

- Defense Threat Reduction Agency (DTRA)

- Department of the Air Force (USAF)

- Department of the Army (ARMY)

- Department of the Navy (NAVY)

- DOD Education Activity (DODEA)

- Joint Staff (JS)

- Missile Defense Agency (MDA)

- National Defense University (NDU)

- National Geospatial-Intelligence Agency (NGIA)

- National Guard Bureau (NGB)

- National Reconnaissance Office (NRO)

- National Security Agency/Central Security Service (NSA)

- Pentagon Force Protection Agency (PFPA)

- Washington Headquarters Services (WHS)

Environmental Protection Agency (EPA)

General Services Administration (GSA)

National Aeronautics and Space Administration (NASA)

National Science Foundation (NSF)

Nuclear Regulatory Commission (NRC)

Office of Personnel Management (OPM)

Small Business Administration (SBA)

Social Security Administration (SSA)

Small and Independent Agencies

- Access Board (USAB)

- Administrative Conference of the United States (ACUS)

- Advisory Council on Historic Preservation (ACHP)*

- American Battle Monuments Commission (ABMC)

- Barry Goldwater Scholarship and Excellence in Education Foundation (BGSEEF)

- Board of Governors of the Federal Reserve (BGFR)*

- Bureau of Consumer Financial Protection (CFPB)

- Central Intelligence Agency (CIA)*

- Commission of Fine Arts (CFA)*

- Commission on Civil Rights (CCR)*

- Committee for Purchase From People Who Are Blind or Severely Disabled (CPPBSD)*

- Commodity Futures Trading Commission (CFTC)*

- Consumer Product Safety Commission (CPSC)*

- Corporation for National and Community Service (CNCS)*

- Council of the Inspectors General on Integrity and Efficiency (CIGIE)*

- Court Services and Offender Supervision Agency for the District (CSOSA)

- Defense Nuclear Facilities Safety Board (DNFSB)*

- Delta Regional Authority (DRA)

- Denali Commission (DC)*

- Election Assistance Commission (EAC)

- Equal Employment Opportunity Commission (EEOC)

- Export-Import Bank of the United States (EXIM)

- Farm Credit Administration (FCA)

- Farm Credit System Insurance Corporation (FCSIC)

- Federal Communications Commission (FCC)

- Federal Deposit Insurance Corporation (FDIC)

- Federal Election Commission (FEC)*

- Federal Energy Regulatory Commission (FERC)

- Federal Housing Finance Agency (FHFA)

- Federal Labor Relations Authority (FLRA)

- Federal Maritime Commission (FMC)

- Federal Mediation and Conciliation Service (FMCS)*

- Federal Mine Safety and Health Review Commission (FMSHRC)

- Federal Retirement Thrift Investment Board (FRTIB)*

- Federal Trade Commission (FTC)

- Gulf Coast Ecosystem Restoration Council (GCERC)

- Harry S Truman Scholarship Foundation (HSTSF)*

- Institute of Museum and Library Services (IMLS)*

- Inter-American Foundation (IAF)*

- James Madison Memorial Fellowship Foundation (JMMFF)

- Japan-United States Friendship Commission (JUSFC)*

- Marine Mammal Commission (MMC)*

- Merit Systems Protection Board (MSPB)*

- Millennium Challenge Corporation (MCC)

- Morris K. Udall and Stewart L. Udall Foundation (MUSUF)

- National Archives and Records Administration (NARA)

- National Capital Planning Commission (NCPC)*

- National Council on Disability (NCD)*

- National Credit Union Administration (NCUA)

- National Endowment for the Arts (NEA)

- National Endowment for the Humanities (NEH)*

- National Labor Relations Board (NLRB)

- National Mediation Board (NMB)

- National Security Agency (NSA)*

- National Transportation Safety Board (NTSB)

- Northern Border Regional Commission (NBRC)*

- Nuclear Waste Technical Review Board (NWTRB)*

- Occupational Safety and Health Review Commission (OSHRC)*

- Office of Government Ethics (OGE)

- Office of Navajo and Hopi Indian Relocation (ONHIR)*

- Office of Special Counsel (OSC)*

- Peace Corps (PC)*

- Pension Benefit Guaranty Corporation (PBGC)

- Postal Regulatory Commission (PRC)

- Postal Service (USPS)

- Presidio Trust (PT)*

- Privacy and Civil Liberties Oversight Board (PCLOB)*

- Railroad Retirement Board (RRB)*

- Securities and Exchange Commission (SEC)

- Selective Service System (SSS)

- Southeast Crescent Regional Commission (SCRC)*

- Southwest Border Regional Commission (SWBRC)*

- Tennessee Valley Authority (TVA)

- Trade and Development Agency (USTDA)

- U.S. Agency for Global Media (USAGM)*

- United States Holocaust Memorial Museum (USHMM)*

- United States Institute of Peace (USIP)*

- United States Interagency Council on Homelessness (USICH)*

- United States International Development Finance Corporation (DFC)

- United States International Trade Commission (USITC)*

Appendix A: Methods

Considering the substantial revisions to the evaluation standards and the evolving federal landscape, FY 2025 serves as a new benchmark for ICT accessibility across the federal government. The methods outlined in this section are the result of detailed discussions and activities between GSA, OMB, and the U.S. Access Board. Agencies, parent agencies, and components self-reported the data in this report. No independent validation or external data was utilized.

Comprehensive submission data by agency, parent agency, and component can be found at Section508.gov under Assessment & Data Downloads. In addition, a supplemental data dictionary details the assessment criteria, answer selections by criteria, dependencies, “understanding” content, and variable identifiers.

Development, Dissemination, and Collection of Assessment Criteria

OMB, in coordination with GSA and the U.S. Access Board, significantly revised this year's assessment criteria. The criteria focused on four categories: Section 508 Management, Acquisition and Procurement, Testing and Remediation, and Conformance.

This year, components were not required to submit conformance information; the communicated intent was for agencies to include component data as part of their conformance reporting. Components answered questions only from the perspective of their component and had the option to answer questions under the acquisition and procurement and testing and remediation categories only if those activities were performed independently from or in addition to the parent agency.

OMB distributed instructions and assessment criteria to Section 508 Program Managers (PM) and Section 508 Points of Contact on April 30, 2025, while simultaneously releasing the instructions and criteria on a Max.gov page. See Table A1 for a list of the four assessment categories that the criteria cover. OMB, GSA, and the U.S. Access Board conducted 11 office hours to share knowledge and address inquiries, including three submission tool demonstration sessions to showcase tool efficiencies and best practices. In addition, two office hours were held for parent agencies with components to demonstrate the additional review that the parent agency must complete for component submissions before they are released to OMB.

The “past 365 days” specified in the criteria generally refers to the 365 days prior to the agency's submission date.

The assessment team implemented a new collection tool this year using OMB Collect, which included multiple features for more efficient data collection and review queues for component- and agency-level points of contact. The collection tool's release, initially set for June 1, 2025, was postponed until July 31, 2025. As a result, OMB extended the submission deadline from August 1, 2025, to September 5, 2025.

| Assessment Category | Description |
|---|---|
| Section 508 Management | Information related to the Section 508 program activities, including policy, accessibility integration, staffing, budget, and training. |
| Acquisition and Procurement | Extent to which ICT accessibility is included in the acquisition lifecycle and vendor requirements. |
| Testing and Remediation | Extent to which ICT accessibility is included in testing, tracking of defects and risks, and remediation actions. |
| Conformance | Specific data points and outcomes related to measuring the agency or parent agency’s conformance to the ICT Standards and Guidelines of tested ICT and Section 508-related complaints. |

Descriptive Analysis

Based on submitted agency and component data, overall performance was assessed by four factors:

- Policy Integration

- Acquisition and Procurement

- Testing and Remediation

- Accessibility Conformance

Table A2 and Table A3 describe each factor and responses that determine factor outcomes for agencies and components, respectively.

| Factor | Description | Agency Associated Questions | Weight |
|---|---|---|---|
| Policy Integration | Extent to which ICT accessibility is integrated in policies and business functions. | Q15a, Q15b, Q15c, Q15d, Q15e, Q15f, Q15g, Q15h, Q15i | Each question weighted equally at 11.11%. |
| Acquisition and Procurement | Extent to which ICT accessibility is included in the acquisition lifecycle and vendor requirements. | Q22, Q23, Q24, Q25, Q26, Q27 | Each question weighted equally at 16.66%. |
| Testing and Remediation | Extent to which ICT accessibility is included in testing, tracking of defects and risks, and remediation actions. | Q29a–Q29f; Q30a–Q30f; Q31a–Q31g; Q32a–Q32g; Q33a–Q33g | Each set of questions by ICT weighted equally at 20%. All responses within each set are equally weighted. |
| Accessibility Conformance | Extent to which tested internal and external ICT conforms to Section 508 standards. | Q29i, Q29k, Q29l; Q30i, Q30k, Q30l; Q31h, Q31j, Q31k; Q32h, Q32j, Q32k; Q33h, Q33j, Q33k; Top Viewed Public Web Pages; Top Viewed Internal Web Pages; Top Viewed Public Electronic Documents; Top Viewed Videos | Each set of questions by ICT weighted equally at 11.11%. |

| Factor | Description | Component Associated Questions | Weight |
|---|---|---|---|
| Policy Integration | Extent to which ICT accessibility is integrated in policies and business functions. | Q13a, Q13b, Q13c, Q13d, Q13e, Q13f, Q13g, Q13h, Q13i | Each question weighted equally at 11.11%. |
| Acquisition and Procurement | Extent to which ICT accessibility is included in the acquisition lifecycle and vendor requirements. | Q20, Q21, Q22, Q23, Q24, Q25 | Each question weighted equally at 16.66%. |
| Testing and Remediation | Extent to which ICT accessibility is integrated in testing, tracking of defects and risks, and remediation actions. | Q28a–Q28f; Q29a–Q29f; Q30a–Q30g; Q31a–Q31g; Q32a–Q32g | Each set of questions by ICT weighted equally at 20%. |

First, the assessment team created an Accessibility Implementation Index, or i-index (referred to as “implementation”), for every agency, parent agency, and component. This index quantified responses to criteria across three categories (shown in Tables A2 and A3 above): Policy Integration, Acquisition and Procurement, and Testing and Remediation. Some questions allowed only “yes” or “no” responses, while others were multiple choice. Each response was assigned a numeric value as follows:

- a) = 0; signifying never, no, or not integrated

- b) = 1; signifying rarely or somewhat integrated

- c) = 2; signifying sometimes or moderately integrated

- d) = 3; signifying often or mostly integrated

- e) = 4; signifying almost always, yes, or fully integrated

and

- a) Yes = 4

- b) No = 0

Furthermore, a selection of “Not applicable” or “N/A” received a 4. This ensured that all agencies were evaluated on an equal number of questions and that none was penalized with a low value for activities or ICT that do not apply to them.

Each of the three factor areas was summed and weighted equally to create the i-index.
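The scoring steps above can be illustrated with a minimal Python sketch. It is not the assessment team's actual tooling: the function names, the input encoding (letters for multiple-choice options, the words "yes", "no", and "N/A" for the other answers), and the linear rescaling to a 5-point scale are assumptions made for illustration. Each factor is passed as a list of question sets so that sets of different sizes can be weighted equally, as the weight columns in Tables A2 and A3 describe.

```python
from statistics import mean

# Numeric values for multiple-choice options a) through e), as listed above.
CHOICE_VALUES = {"a": 0, "b": 1, "c": 2, "d": 3, "e": 4}
YES_NO_VALUES = {"yes": 4, "no": 0}
MAX_VALUE = 4  # also the value given to "Not applicable" / "N/A"


def score_response(response: str) -> int:
    """Convert one raw answer (hypothetical encoding) to its 0-4 value."""
    key = response.strip().lower()
    if key in ("n/a", "not applicable"):
        return MAX_VALUE
    if key in YES_NO_VALUES:
        return YES_NO_VALUES[key]
    return CHOICE_VALUES[key]


def factor_score(question_sets: list[list[str]]) -> float:
    """Average each set of questions, then average the sets equally.

    Returns a fraction between 0 and 1.
    """
    set_means = [mean([score_response(r) for r in qs]) for qs in question_sets]
    return mean(set_means) / MAX_VALUE


def i_index(factors: dict[str, list[list[str]]], scale: float = 5.0) -> float:
    """Equal-weight average of the factor scores.

    Assumption: the 5-point scaling is a simple linear rescaling.
    """
    return scale * mean([factor_score(sets) for sets in factors.values()])


example = {
    "policy_integration": [["e", "a"]],          # one set of two questions
    "acquisition_procurement": [["yes"]],
    "testing_remediation": [["c"], ["n/a"]],     # two ICT sets
}
print(i_index(example))
```

Under these assumptions, an agency answering “N/A” to every question in a set receives the same score for that set as one answering “fully integrated,” which mirrors the no-penalty rule described above.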

Next, we created an Accessibility Conformance Index, or c-index (referred to as “conformance”), to assess how well agencies met Section 508 and ICT accessibility requirements based on the ICT tested in the last 365 days. This index quantified agency responses to nine specific criteria that directly relate to quantifiable compliance outcomes for hardware, software, public-facing electronic documents, public web pages, internal web pages, and videos. Components do not have a conformance index.

Using the same logic as above, if an agency reported “No” (that is, it did not have a tracking mechanism) for the ICT listed in Q29i, Q30i, Q31h, Q32h, or Q33h, it was assigned a “0” for that ICT in Q29l, Q30l, Q31k, Q32k, or Q33k. If an agency selected “N/A” for the ICT in Q29i, Q30i, Q31h, Q32h, or Q33h, it was assigned a “1” for that ICT in Q29l, Q30l, Q31k, Q32k, or Q33k.
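As a rough illustration of this substitution rule for a single ICT type, the sketch below returns a value between 0 and 1. The function name and argument names are hypothetical. The fraction of conforming items over tested items follows the hardware row of Table A4; cases the text does not describe raise an error rather than guess a value.

```python
from typing import Optional


def ict_conformance(tracking: str,
                    conforming: Optional[int] = None,
                    tested: Optional[int] = None) -> float:
    """Score one ICT type from its tracking answer and reported test counts.

    tracking   -- answer to the tracking-mechanism question ("Yes", "No", "N/A")
    conforming -- number of tested items that fully conform, if reported
    tested     -- total number of items tested, if reported
    """
    answer = tracking.strip().lower()
    if answer == "no":
        return 0.0  # no tracking mechanism: assigned "0"
    if answer in ("n/a", "not applicable"):
        return 1.0  # ICT type does not apply: assigned "1"
    if conforming is None or not tested:
        # Not covered by the rule described above; left to the caller.
        raise ValueError("tracking reported but no usable test counts")
    return conforming / tested


print(ict_conformance("No"))          # 0.0
print(ict_conformance("N/A"))         # 1.0
print(ict_conformance("Yes", 3, 4))   # 0.75
```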

If an agency did not include any results for Top-Viewed ICT despite having that ICT, it was assigned a “0.” If it did not have that type of ICT, it was assigned a “1”; this ICT may have been selected in previous questions as “Not Applicable,” noted in responses for Q38, or marked in the submission with “N/A.” Within the provided results, any Section 508 failure was converted into a “0” for that ICT item. Because of how agencies reported Top-Viewed ICT outcomes, submissions used several different notations for a failure of a Section 508 standard, including an “X,” a numerical count of failures, or “FAIL.” Blank cells, “Pass,” “N/A,” or “0” denoted a passing Section 508 standard.
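The notation cleanup described above could be sketched in Python as follows. The function names are hypothetical, and treating notations as case-insensitive is an assumption; the pass and fail notations themselves are the ones listed in the paragraph. Any notation not listed raises an error so that it can be reviewed by hand.

```python
def standard_passes(cell) -> bool:
    """Return True if a reported cell denotes a passing Section 508 standard."""
    if cell is None:
        return True  # blank cell
    text = str(cell).strip().lower()
    if text in ("", "pass", "n/a", "0"):
        return True
    if text in ("x", "fail"):
        return False
    try:
        # A numeric entry is read as a count of failures.
        return float(text) == 0
    except ValueError:
        raise ValueError(f"unrecognized notation: {cell!r}") from None


def item_score(cells) -> int:
    """Score one Top-Viewed ICT item: 0 if any standard fails, otherwise 1."""
    return 1 if all(standard_passes(c) for c in cells) else 0


print(item_score(["", "Pass", "N/A", 0]))  # 1
print(item_score(["Pass", "X"]))           # 0
print(item_score(["Pass", 3]))             # 0
```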

Each question was assigned numerical values and converted as shown in Table A4. Importantly, each index was then scaled to a 5-point scale.

| Topic | Criteria | Conversion Approach |
|---|---|---|
| Hardware | Q29k and Q29l | If data was provided for Q29l, the result is displayed as a percentage of total hardware that fully conforms out of total hardware tested. Otherwise, convert to “0” or “1.” |